Data do not give up their secrets easily. They must be tortured to confess.

Jeff Hopper, Bell Labs

The National Assessment of Educational Progress (NAEP) was again in the news recently. Slate published a video by the activist Campbell Brown in which she gave advice to the next president. She began by claiming that "two out of three 8th grade students in this country cannot read or do math at grade level." When asked how she came up with that figure, Brown cited NAEP, where roughly one-third of eighth-graders achieve "proficient."

Confusing "proficient" with "grade-level" is a common error. What made this uncommon was the vehemence with which Brown and her defenders stuck by her mistake, in spite of explanations by experts, including NAEP itself, that this was incorrect. Surely "proficient" has its everyday meaning, they insisted; those who claimed otherwise were "using bureaucratic jargon," "splitting hairs about definitions," or "wading in academic minutia."

NAEP has been around for a little more than four decades. It provides an enormous trove of data about K-12 education, across time and across the nation. While it's a wonderful tool, NAEP data (like its language) can easily be misunderstood--usually by those who don't realize that tests and their data are complicated.

There are actually two NAEPs. Since the 1970s, NAEP has administered a long-term assessment every few years to a sample of students, testing reading and mathematics at ages 9, 13, and 17. While the long-term assessments have changed slightly over the past four decades, their intended purpose is to make comparisons over time. Results are reported as scores (0-500) along with numerical levels.

The Main NAEP began in 1990 and tests a sample of students in grades 4, 8, and 12 in a number of subjects, including reading and mathematics. Because frameworks for the Main NAEP change over time (reflecting changes in curricula), these tests are meant to make comparisons between locations rather than over time. Reporters and pundits regularly ignore this distinction. The Main NAEP provides "standards based" results, assigning four different achievement levels--Advanced, Proficient, Basic, and Below-Basic. The levels and their meanings are assigned in an elaborate and somewhat arcane process.

Here is another example that illustrates the difficulty of understanding test results.

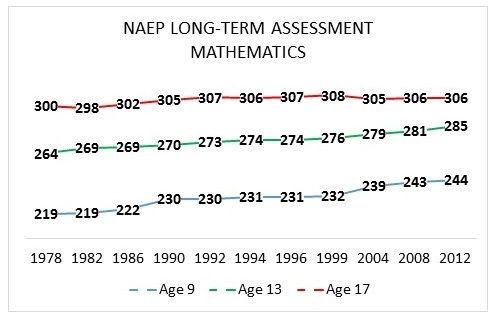

The NAEP Long-term Assessment in mathematics has been administered for more than three decades. The average scores (0-500 scale) for the three groups of students have increased over the years.

While average scores at ages 9 and 13 went up by 25 and 21 points respectively, scores at age 17 rose by only 6 points. Pundits often summarize this by saying that progress made in lower grades "dissipated" by the time students reached high school. But the situation is not so simple.

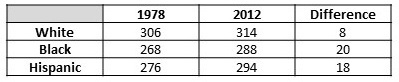

Let's start with more detail about those scores. Here are the average NAEP scores for White, Black, and Hispanic 17-year-olds.

How could average scores go up more for each separate demographic group than for the whole? This is a well-known statistical phenomenon called Simpson's Paradox. Exactly the same phenomenon occurred for SAT scores in the 1970s, which motivated the commission behind the famous report A Nation at Risk. This already changes the story: Black and Hispanic 17-year-olds made substantial gains (larger than those at ages 9 and 13).
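A small numerical sketch may make the paradox concrete. The two groups, their scores, and their shares below are invented for illustration and are not NAEP figures. The only point is that an overall average is a weighted average, so it depends on the mix of groups as well as on each group's score.

```python
# Hypothetical numbers for illustration only; these are NOT NAEP data.
# Each year maps group name -> (share of test-takers, average score).
early = {"group_a": (0.80, 300.0), "group_b": (0.20, 270.0)}
late = {"group_a": (0.55, 305.0), "group_b": (0.45, 285.0)}


def overall_mean(groups):
    """Overall average = sum over groups of (share * group average)."""
    return sum(share * score for share, score in groups.values())


def group_gains(before, after):
    """Change in each group's average score between the two years."""
    return {name: after[name][1] - before[name][1] for name in before}


gains = group_gains(early, late)
overall_gain = overall_mean(late) - overall_mean(early)

print("gain by group:", gains)
print("overall gain:", round(overall_gain, 2))
```

With these made-up inputs, the two groups gain 5 and 15 points, yet the overall average gains only about 2, because the lower-scoring group makes up a much larger share of test-takers in the later year.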

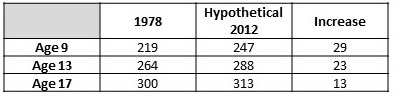

Why did scores for the whole group rise so little? Perhaps this represents a lack of progress, but at least part of the cause is the composition of the students sampled. In 1978, 83% of test-takers were White; in 2012, only 56% were. Moreover, while 17-year-olds are generally in grade 11, some are in grade 10 or below. The percentage of students below grade 11 rose from 15 to 23 during this time. In both cases, the composition shifted toward students who on average score lower on NAEP. If in a hypothetical 2012 the composition of test-takers exactly matched that in 1978, and all else remained the same, the increase in average scores would have more than doubled. (Similar calculations for ages 9 and 13 result in smaller changes.) Here are the adjusted scores for all three age groups.
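The adjustment described here amounts to recomputing the later year's average with the earlier year's composition. The following is a minimal sketch of that idea, not the calculation behind the adjusted scores. Only the 83% and 56% shares come from the text. The group scores are invented, and lumping all remaining students into a single "other" group is a simplifying assumption.

```python
# Hypothetical scores for illustration only; these are NOT NAEP results.
# The "white" shares come from the text; the single "other" group and
# all four average scores are assumptions made for this sketch.
shares_1978 = {"white": 0.83, "other": 0.17}
shares_2012 = {"white": 0.56, "other": 0.44}
scores_1978 = {"white": 306.0, "other": 272.0}  # invented
scores_2012 = {"white": 314.0, "other": 292.0}  # invented


def weighted_mean(shares, scores):
    """Average score for a population with the given group shares."""
    return sum(shares[group] * scores[group] for group in shares)


baseline = weighted_mean(shares_1978, scores_1978)

# Actual change: both the scores and the mix of test-takers changed.
actual_gain = weighted_mean(shares_2012, scores_2012) - baseline

# Counterfactual: 2012 group scores, but the 1978 mix of test-takers.
adjusted_gain = weighted_mean(shares_1978, scores_2012) - baseline

print("actual gain:", round(actual_gain, 2))
print("composition-adjusted gain:", round(adjusted_gain, 2))
```

With these invented inputs the actual gain is about 4.1 points and the composition-adjusted gain is about 10.0, more than double. A fuller version would hold grade level fixed as well, since the text notes that the share of students below grade 11 also rose.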

So the "flat" scores for 17-year-olds are partly due to compositional effects. Not so simple after all.

But the rise in scores is still less for older students. Why? Claiming that "progress has dissipated" makes little sense since the three tests examine different skills. The cause is more likely connected to the nature of the tested material (which at age 17 is not only more challenging but also taught long before the test is administered), as well as the nature of 17-year-olds themselves (who are somewhat disengaged). Not so simple.

Many similarly deep questions arise for NAEP.

• While achievement gaps for Black and Hispanic students narrowed during this period, almost all the narrowing occurred prior to 1990. Why? What changed?

• What caused the gender gap to narrow?

• How did doubling the percentage of 13-year-olds taking algebra affect scores?

Such questions are simple to state, but each has a complicated answer. In any case, we are unlikely to find those answers if we insist that interpreting test results is simple, that using precise language is splitting hairs, and that employing statistics with care is merely wading in academic minutia.

Indeed, data do not give up their secrets so easily.