Why are some pollsters producing results among "likely voters" that vary, either over time or compared to other pollsters? A week ago, I offered a primer on how pollsters define and choose likely voters. Today, I want to consider whether the variation we are seeing is unusual, and if so, what may explain it.

The best example of this phenomenon can be found in Gallup's release Monday of their latest results for the national "generic ballot" for Congress. For the second straight week, they found a remarkably negative result for Democrats when they screened for the most "likely voters." Using their traditional likely voter model that assumes a typical midterm turnout of 40% of adults, they found Republicans leading by a whopping 17-point margin (56% to 39%). Using a slightly looser screen that assumes a much-higher-than-usual 55% turnout, they show Republicans leading by an only slightly narrower 12-point margin (53% to 41%).
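To make the idea of a turnout-based cutoff concrete, here is a minimal sketch of how such a screen might work. It is not Gallup's actual procedure: the function name `margin_at_cutoff`, the 2-to-7 engagement `score`, and the 20 respondents in `toy_sample` are all invented for illustration, and real models handle ties at the cutoff and weighting far more carefully.

```python
# Hypothetical illustration of a cutoff-style likely voter screen.
# All names and data here are assumptions, not any pollster's real method.

def margin_at_cutoff(respondents, turnout):
    """Keep the top `turnout` share of respondents by engagement score.

    Returns (rep_pct, dem_pct, margin) among those kept, where margin is
    Republican minus Democratic support in percentage points.
    """
    # Highest scores first; Python's sort is stable, so ties keep list order.
    ranked = sorted(respondents, key=lambda r: r["score"], reverse=True)
    keep = ranked[: round(turnout * len(ranked))]
    rep = 100 * sum(r["vote"] == "R" for r in keep) / len(keep)
    dem = 100 * sum(r["vote"] == "D" for r in keep) / len(keep)
    return rep, dem, rep - dem


# Invented toy sample: (engagement score, generic ballot preference).
_raw = [
    (7, "R"), (7, "R"), (7, "R"), (7, "D"),
    (6, "R"), (6, "R"), (6, "D"), (6, "R"),
    (5, "D"), (5, "R"), (5, "D"),
    (4, "R"), (4, "D"), (4, "D"),
    (3, "D"), (3, "R"), (3, "D"),
    (2, "D"), (2, "D"), (2, "R"),
]
toy_sample = [{"score": s, "vote": v} for s, v in _raw]

for turnout in (1.0, 0.55, 0.40):
    rep, dem, margin = margin_at_cutoff(toy_sample, turnout)
    print(f"assumed turnout {turnout:.0%}: R {rep:.0f}%, D {dem:.0f}%, margin {margin:+.0f}")
```

In this made-up sample the full group is evenly split, and the Republican margin grows as the assumed turnout shrinks. That happens only because the invented data put more Republicans at the top of the engagement scale. The point of the sketch is that the same interviews can yield different margins depending solely on where the cutoff is drawn.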

Either way, the result is one of the best for the Republicans in Gallup's 60 years of tracking the national generic ballot, and if accurate, would foretell electoral armageddon for Democrats. According to the political science models, a margin of just five points for Republicans among likely voters on Gallup's final survey would predict a gain of roughly 50 seats, more than enough to attain a House majority. According to Emory University political scientist Alan Abramowitz, a Republican margin of 12 to 13 percentage points would translate into a Republican gain of roughly 80 seats, giving the GOP their biggest majority since the 1920s.

But the generic ballot results from other national polls of "likely voters" have not been nearly as pessimistic for the Democrats. A half-dozen independent media surveys conducted over the last two weeks have yielded narrower margins among "likely voters," ranging from a nine-point Republican lead to a two-point Democratic edge. If we use our interactive chart to select just the pollsters other than Gallup that have released recent results among "likely voters," it shows a five-point Republican lead (47% to 42%) on the generic ballot.

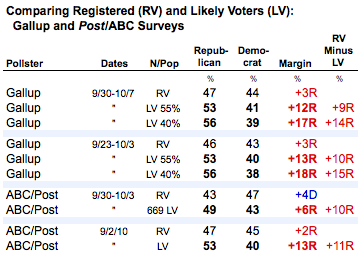

Why the difference? Let's start by looking at how much the results change on the same surveys when Gallup and ABC News/Washington Post narrow their samples from all registered voters to likely voters. The two organizations are the only national pollsters (so far) to report results for both populations. While their reported margins differ, both show similarly large shifts from registered to likely voters. On four polls, Republicans gain roughly 10 or more percentage points on the margin as these pollsters move from registered to likely voters.

What is the gap about? Part of it, as we know from decades of research including the biennial reports produced by the U.S. Census Current Population Survey, is that voters tend to be older, better educated, more rural and more white than the population at large, a pattern that is especially evident in lower turnout for off-year elections. Because these demographic groups tend to be more Republican, likely voter models that move poll samples toward a more accurate demographic snapshot also nudge vote preference a few points more Republican. Such differences were typically in the single-digit range in 2004 (see examples here and here), and much narrower in 2006 (here and here) and 2008. This year, obviously, those gaps are much bigger and appear to be more variable across pollsters.

Of course, these bigger-than-usual differences are also a reflection of the huge gap in voter enthusiasm observed by various pollsters this year. Republicans have been much more likely to express enthusiasm about voting than Democrats, and that enthusiasm is translating into greater reported interest in the campaign and intent to vote -- two key questions that drive pollsters' likely voter models.

But does the inconsistency among pollsters suggest a new, emerging problem with likely voter screens? Are they simply not working as well this year as in the past? Tempting as it is to spin theories about how these gaps may result from the various new challenges to polling -- cell phones, lower response rates, new technologies and the like -- comparisons to elections like 2006 and 2008, when an enthusiasm gap favored Democrats and thus narrowed the typical differences between registered and likely voters, are misleading. We really need to look back to 1994, the last election in which enthusiasm and turnout skewed decisively Republican.

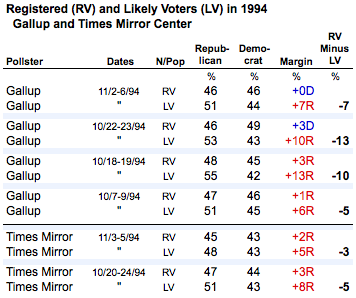

As it turns out, a search of reports from 1994 shows that Gallup's likely voter model produced similar gaps between likely and registered voters.

As the table above shows, Gallup (then in partnership with USA Today and CNN) conducted surveys in mid-October that showed Republicans with double-digit leads among likely voters and produced differences of 10 to 13 points between registered and likely voters. But the surveys by the Times Mirror Center (which later became the Pew Research Center) found much narrower gaps. Ultimately, the Republican lead narrowed on the Gallup likely voter sample to 7 points, much closer to the final Times Mirror poll and in line with the actual popular vote cast for the House (52.1% for the Republicans, 45.2% for the Democrats).

In their release last week, Gallup noted that their likely voter polls have often overstated the margin for the leading party in September and early October of off-year elections:

> Gallup's historical election trends suggest that the race often tightens in the final month of the campaign. In September and October 1994, 2002, and 2006, Gallup's likely voter estimates showed larger margins for the leading party than what the final estimate showed (with the final poll in 2002 moving from a slight Democratic advantage to a Republican lead in the final poll).

My sense is that some of the big differences we have seen this year -- both in comparing Gallup's results to other national polls and on some polls at the state level -- may be about the way the classic likely voter model behaves in a year in which Republicans are generally more enthusiastic about voting than Democrats. The demographic pattern of enthusiasm reinforces the demographics of those already likely to vote, making the impact of the likely voter model on the overall result that much bigger.

In a typical year, a likely voter model or screen functions like a nudge. It gives poll samples a push toward the demographics of likely voters, and in so doing makes those samples slightly more Republican. That nudge makes them slightly more accurate in predicting the outcome.

With that in mind, consider this imperfect metaphor: In a typical year, the LV model is like giving a shopping cart a nudge on a level surface. It will roll a few inches, but no more. Years like 1994 and 2010, however, are like giving that same cart a nudge on an incline. Nudge it too much, and the cart will roll down the hill.
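The incline can be illustrated with a toy calculation. This is a sketch under loudly stated assumptions, not a description of any pollster's model: the function `lv_margin`, the evenly spaced engagement scores, and the `enthusiasm_gap` parameter are all hypothetical. Registered voters are split evenly between the parties by construction, so any margin among "likely voters" comes purely from the screen.

```python
# Hypothetical toy model: how an enthusiasm gap interacts with a turnout cutoff.
# Nothing here is drawn from real polling data.

def lv_margin(enthusiasm_gap, turnout, n_per_party=1000):
    """Republican-minus-Democratic margin (points) among 'likely voters'.

    Each party gets n_per_party respondents with engagement scores spread
    evenly over [0, 1). Republican scores are shifted up by `enthusiasm_gap`.
    The screen keeps the top `turnout` share of all respondents by score.
    """
    people = []
    for i in range(n_per_party):
        base = i / n_per_party
        people.append((base + enthusiasm_gap, "R"))  # assumed extra enthusiasm
        people.append((base, "D"))
    # Most engaged first; the stable sort keeps tied pairs in list order.
    people.sort(key=lambda p: p[0], reverse=True)
    keep = people[: round(turnout * len(people))]
    rep = sum(1 for _, party in keep if party == "R")
    dem = len(keep) - rep
    return 100 * (rep - dem) / len(keep)


for gap in (0.0, 0.05, 0.10):
    for turnout in (0.55, 0.40):
        print(f"gap {gap:.2f}, assumed turnout {turnout:.0%}: "
              f"R margin {lv_margin(gap, turnout):+.1f}")
```

With no enthusiasm gap the screen leaves the margin at zero in this setup. With a gap of 0.10, the same electorate shows a Republican margin of 12.5 points at a 40% cutoff and roughly 9 points at 55%. Those specific numbers are artifacts of the toy assumptions, but the direction is the point: the tighter the screen and the larger the gap, the farther the cart rolls.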

(To be fair, Gallup reported last week on the demographics of its new likely voter sample and found a "typical" composition by gender, age, race and education, but found that likely voters were far more Republican and conservative than in previous elections, including 1994.)

It is also important to remember that the "science" of likely voter models derives from polls taken during the last week of the campaign. Over the years, pollsters have looked closely at their final polls before the election -- sometimes collecting actual respondent turnout data to see which voted and which did not -- to see what changes or alternative models would have more accurately predicted the outcome. The challenge, especially with models that rely on multiple-question indexes, is that their accuracy when applied to polls taken a month or more before the election is unknown.

So don't be surprised if Gallup's bigger-than-average generic ballot margin narrows over the next three weeks, and don't be surprised if similar differences in some statewide polls narrow as well.

I have more to say about the science of likely voter models and what pollsters can do to mitigate these big swings in likely voter demographic composition, but I will save that for another post. To be continued ...