WASHINGTON -- How did the polls do this year? And who was the most accurate pollster? While crowning a polling "winner" can be a dubious proposition on the day after the election, some immediate lessons are apparent: on average, the final statewide pre-election polls once again provided a largely unbiased measurement of the outcomes of most races; Congressional District polling had a slight Democratic skew; national polls that sampled both landline and cell phones measured national Congressional vote preference more accurately than those that sampled only landline phones; and the venerable Gallup Poll took one on the chin.

I have never been a fan of the usual rush to crown "the most accurate" pollster on the morning after each election. Votes are still being counted, and the final, certified results will likely change enough to alter any rankings based on error scores calculated at this hour. Moreover, as ABC News polling director Gary Langer puts it, polling estimates are not "akin to laser surgery," and it's a fallacy to assume that "pinpoint accuracy" is attainable in pre-election polls. At best, the estimate produced by any given poll will vary within a predictable range of random variation. If ten polls all capture a result within that "margin of error," they have all performed as they should. Some will land slightly closer than others, but any given poll may win what Langer calls "the horse race lottery" and get the result exactly right by chance alone.

But even at this early hour, we can begin to identify polls whose horse race estimates have fallen outside the margin of error. Start with the national vote: as of 6:35 a.m. Eastern time, the Associated Press had reported results from roughly 96% of the precincts in Congressional races nationwide. My tally of the district-level AP vote count shows that more than 78 million voters chose Republican candidates for Congress over Democrats by a margin of 7.4 percentage points (52.1% to 44.7%), with a small share (3.2%) casting ballots for independent and third-party candidates.

The share of uncounted precincts at this hour likely understates the number of early votes still uncounted in Democratic states like California and Washington, so the final margin could be as much as a point narrower. In 2006, the initial national count gave Democrats a six-point advantage, but it grew to 7.2 points once all votes were counted.

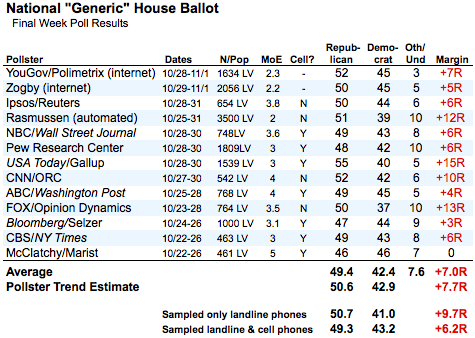

How did the final polls measuring the national "generic" vote preference compare to the ultimate Republican margin that will likely fall somewhere between six and seven percentage points? As the table below shows, the telephone surveys conducted by the Pew Research Center, Ipsos/Reuters, NBC/Wall Street Journal, CBS/New York Times and the two Internet-based surveys by YouGov/Polimetrix and Zogby Interactive all produced margins that are very close to the likely final result.

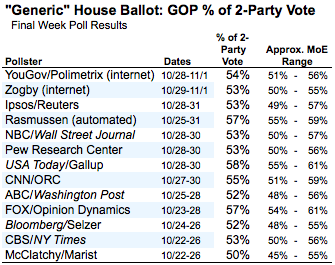

If we set aside the undecided and calculate the Republican percentage of the two-party vote for each survey, most surveys capture the likely final result (53%) within their respective margins of error (adjusted slightly to account for the excluded undecided voters). The most prominent exception is the Gallup Poll. The Republican share of the two-party vote in its final survey (58%) will likely miss the actual result by five percentage points, falling just outside its expected range of variation (55% to 61%). Gallup's error on the margin will likely be its biggest since it started asking the generic vote question 60 years ago.
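The two-party calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not Pollster's actual procedure: it assumes simple random sampling and a 95% confidence level, and, as a hypothetical stand-in for the slight adjustment for undecided voters, it shrinks each poll's sample to the respondents who chose a major-party candidate. The function names and the poll figures in the example are hypothetical.

```python
import math


def two_party_share(rep_pct: float, dem_pct: float) -> float:
    """Republican share of the two-party vote, in percent (undecided and others dropped)."""
    return 100.0 * rep_pct / (rep_pct + dem_pct)


def two_party_moe(rep_pct: float, dem_pct: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error, in percentage points, for the two-party share.

    Assumption: simple random sampling, with the sample shrunk to respondents
    who picked a Republican or a Democrat (a hypothetical undecided adjustment).
    """
    p = rep_pct / (rep_pct + dem_pct)
    n_decided = n * (rep_pct + dem_pct) / 100.0
    return 100.0 * z * math.sqrt(p * (1.0 - p) / n_decided)


def captures(actual_share: float, rep_pct: float, dem_pct: float, n: int) -> bool:
    """True if the actual two-party result falls inside the poll's interval."""
    share = two_party_share(rep_pct, dem_pct)
    moe = two_party_moe(rep_pct, dem_pct, n)
    return share - moe <= actual_share <= share + moe


if __name__ == "__main__":
    # Hypothetical polls of 1,000 respondents each, checked against a 53% result.
    for rep, dem in [(50.0, 44.0), (55.0, 40.0)]:
        share = two_party_share(rep, dem)
        moe = two_party_moe(rep, dem, 1000)
        hit = captures(53.0, rep, dem, 1000)
        print(f"R {rep} / D {dem}: {share:.1f}% +/- {moe:.1f} -> captures 53%: {hit}")
```

In this sketch the second hypothetical poll lands near 58% with an interval of roughly 55% to 61%, so a 53% result falls outside it, the same pattern described for Gallup.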

Focusing on individual polls can get dicey, but averaging results across surveys -- which effectively pools their sample sizes -- begins to yield important lessons. For example, the average predicted margin among the seven surveys that sampled both landline and cell phones (+6.2 points Republican) comes much closer to the likely final result than the average margin among the four surveys that sampled only landline phones (+9.7 points Republican).

At the state level, polls were remarkably free of bias on average, so our "trend estimates" correctly predicted the winners in almost all states. The most notable exception was Nevada, where Harry Reid defeated Sharron Angle by more than five percentage points (50.2% to 44.6%) after trailing on our aggregate of all public polls by nearly three (48.8% to 46.0% as of my final update yesterday).

When we drill down to the accuracy of individual polls, we see that most of the statewide polls conducted in the last two to three weeks of the campaign captured the final results within their theoretical margins of sampling error. We have examined 232 polls in contests with at least 95% of precincts counted, excluding those from Alaska, Arizona, California and Washington (states where large portions of the early vote remain uncounted), and nearly all captured the actual results within their theoretical margins of error. In fact, the share of polls missing the result as of this morning (2%) appears to be well below the 5% we would expect by chance alone, although these calculations should be considered preliminary.
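To see why a 2% miss rate looks low, one can treat each poll's 95% interval as a trial that misses 5% of the time and ask how often so few misses would occur. The sketch below is only an illustration: it assumes, unrealistically, that the 232 polls' errors are independent (many polled the same races, so they are not), and it uses a hypothetical count of 5 misses, since the exact count is not reported here. The function names are hypothetical.

```python
import math


def expected_misses(n_polls: int, confidence: float = 0.95) -> float:
    """Number of polls expected to miss by chance alone if intervals are well calibrated."""
    return n_polls * (1.0 - confidence)


def prob_at_most(k: int, n_polls: int, miss_rate: float = 0.05) -> float:
    """Binomial probability of k or fewer misses, assuming independent polls."""
    return sum(
        math.comb(n_polls, i) * miss_rate**i * (1.0 - miss_rate) ** (n_polls - i)
        for i in range(k + 1)
    )


if __name__ == "__main__":
    n = 232
    hypothetical_misses = 5  # roughly 2% of 232; the exact count is an assumption
    print(f"expected misses by chance: {expected_misses(n):.1f}")
    print(f"P(<= {hypothetical_misses} misses): {prob_at_most(hypothetical_misses, n):.3f}")
```

Under these assumptions, roughly 11 or 12 misses would be expected by chance; because the independence assumption does not hold, the binomial probability is only a rough guide.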

Overall, the final polling results at the statewide level were also remarkably unbiased. Errors in predicting the Democratic percentage of the two-party vote have so far averaged near zero in Senate contests (-0.1 points) and just slightly more (+0.2) in contests for governor. The average absolute error in the Democratic percentage of the two-party vote -- a measure of how far individual polls typically missed the result, regardless of direction -- was just 2.3 points.
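The difference between the average error (bias) and the average absolute error is easy to see in a small sketch. Here a poll's error is assumed to be its Democratic share of the two-party vote minus the actual Democratic share, so positive values favor Democrats. That matches the sign convention used in these figures, but the exact definition is our assumption. The function names and error values are hypothetical.

```python
from statistics import mean
from typing import Sequence


def poll_error(poll_dem: float, poll_rep: float, actual_dem: float, actual_rep: float) -> float:
    """Poll's Democratic two-party share minus the actual Democratic two-party share, in points."""
    poll_share = 100.0 * poll_dem / (poll_dem + poll_rep)
    actual_share = 100.0 * actual_dem / (actual_dem + actual_rep)
    return poll_share - actual_share


def mean_error(errors: Sequence[float]) -> float:
    """Average signed error (bias): positive means polls overstated the Democrat."""
    return mean(errors)


def mean_absolute_error(errors: Sequence[float]) -> float:
    """Average size of the miss, ignoring direction."""
    return mean(abs(e) for e in errors)


if __name__ == "__main__":
    # Hypothetical errors: misses in opposite directions cancel out in the bias
    # but still add up in the absolute error.
    errors = [2.0, -2.0, 1.0, -1.0]
    print(mean_error(errors), mean_absolute_error(errors))
```

This is why a near-zero average error can coexist with a typical miss of a couple of points: the two statistics answer different questions.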

In polling of individual Congressional Districts, however, we did see a more significant statistical bias. On average, the 55 House polls that completed interviewing after October 15 erred by nearly two points (+1.8) in favor of the Democratic candidates and showed a slightly larger average absolute error (3.3) than the statewide polls. If we expand our focus to all polls fielded in October, both the bias (+2.2) and the absolute error (3.5) grow slightly. The skew toward the Democrats in district-level polling -- a level of bias we did not see in 2006 or 2008 -- explains why our seat projections understated the Republican gains by 13 seats.

These are very cursory first impressions based on votes still being counted. Moreover, these statistics tell us only about the polls conducted in the last few weeks of the campaign, and "the last poll" is only part of the story. Fully evaluating the performance of polls in 2010 requires looking back at the conflicting narratives created by results that diverged more often in September and early October than in the final weeks.

We will have more on how specific pollsters, types of polls and our state and district level trend estimates performed as we get a more complete picture of the results over the next few days.

Stay tuned.

Follow Mark Blumenthal and HuffPost Pollster on Twitter