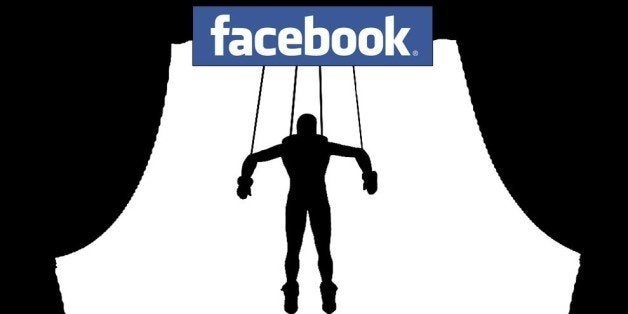

This weekend, the results of an experiment conducted by researchers and Facebook were released, creating a fierce debate over the ethics of the endeavor. The experiment involved 689,003 people on Facebook whose News Feed was adjusted to contain either more positive or more negative emotional content. The researchers were looking for whether this had an effect on these people's moods. And it did, albeit a small one. People exposed to more positive content had posts that were more positive, and those exposed to more negative content had posts that were more negative. This was measured by the types of words they used.

The experiment provoked a fierce response from critics, some of whom decried it as unethical and creepy. In my view, it isn't productive to castigate Facebook or the researchers, as the problems here emerge from some very difficult unresolved issues that go far beyond this experiment and Facebook. I want to explore these issues, because I'm more interested in making progress on them than in casting stones.

Consent

A primary concern with the experiment was whether the people subjected to it gave the appropriate consent. People were deemed to have consented based on their agreement to Facebook's Data Use Policy, which states that Facebook "may use the information we receive about you... for internal operations, including troubleshooting, data analysis, testing, research and service improvement."

This is how consent is often obtained when companies want to use people's data. The problem with obtaining consent in this way is that people rarely read the privacy policies or terms of use of a website. It is a pure fiction that a person really "agrees" with a policy such as this, yet we use this fiction all the time. For more about this point, see my article, "Privacy Self-Management and the Consent Dilemma," 126 Harvard Law Review 1880 (2013).

Contrast this form of obtaining consent with what is required by the federal Common Rule, which regulates federally-funded research on human subjects. The Common Rule requires as a general matter (subject to some exceptions) that the subjects of research provide informed consent. The researchers must get the approval of an institutional review board (IRB). Because the researchers included not just a person at Facebook, but also academics at Cornell and U.C. San Francisco, the academics did seek IRB approval, but that was granted based on the use of the data, not on the way it was collected.

Informed consent, required for research and in the healthcare context, is one of the strongest forms of consent the law requires. It is not enough simply to fail to check a box or fail to opt out. People must be informed of the risks and benefits and affirmatively agree.

The problem with the Facebook experiment is that it exposed the rather weak form of consent that exists in many of our online transactions. I'm not sure that informed consent is the cure-all, but it would certainly have been better than the much weaker form of consent involved with this experiment.

This story has put two conceptions of consent into stark contrast -- the informed consent required for much academic research on humans and the rather weak consent that is implied if a site has disclosed how it collects and uses data in a way that nobody reads or understands.

In Facebook's world of business, its method of obtaining consent was a very common one. In the academic world and in healthcare, however, the method of obtaining consent is more stringent. This clash has brought the issue of consent to the fore. What does it mean to obtain consent? Should the rules of the business world or academic/healthcare world govern?

Beyond this issue, the problems of consent go deeper. There are systemic difficulties in people making the appropriate cost-benefit analysis about whether to reveal data or agree to certain potential uses. In many cases, people don't know the potential future uses of their data or what other data it might be combined with, so they can't really assess the risks or benefits.

Privacy Expectations

Perhaps we should focus more on people's expectations than on consent. Michelle Meyer points out in a blog post that IRBs may waive the requirements to obtain informed consent if the research only involves "minimal risk" to people, which is a risk that isn't greater than risks "ordinarily encountered in daily life." She argues that the "IRB might plausibly have decided that since the subjects' environments, like those of all Facebook users, are constantly being manipulated by Facebook, the study's risks were no greater than what the subjects experience in daily life as regular Facebook users, and so the study posed no more than 'minimal risk' to them."

Contrast that with Kashmir Hill, who writes: "While many users may already expect and be willing to have their behavior studied -- and while that may be warranted with 'research' being one of the 9,045 words in the data use policy -- they don't expect that Facebook will actively manipulate their environment in order to see how they react. That's a new level of experimentation, turning Facebook from a fishbowl into a petri dish, and it's why people are flipping out about this."

Although Meyer's point is a respectable one, I side with Hill. The experiment does strike me as running counter to the expectations of many users. People may know that Facebook manipulates the news feed, but they might not have realized that this manipulation would be done to affect their mood.

One thing that is laudable on Facebook's part is that the study was publicly released. Too often, uses of data are kept secret and never revealed, and the data is used solely for the company's own benefit rather than shared with others. I applaud Facebook's transparency here because so much other research is going on in a clandestine manner, and the public never receives the benefits of learning the results.

It would be wrong merely to attack Facebook, because then companies would learn that they would just get burned for revealing what they were doing. There would be less transparency. A more productive way forward is to examine the issue in a broader context, because it is not just Facebook that is engaging in these activities. The light needs to shine on everyone, and better rules and standards must be adopted to guide everyone using personal data.

Manipulation

Another issue involved in this story is manipulation. The Facebook experiment involved an attempt to shape people's moods. This struck many as creepy, an attempt to alter the way people feel.

But Tal Yarkoni, in a thoughtful post defending Facebook, argues that "it's not clear what the notion that Facebook users' experience is being 'manipulated' really even means, because the Facebook news feed is, and has always been, a completely contrived environment." Yarkoni points out that the "items you get to see are determined by a complex and ever-changing algorithm." Yarkoni contends that many companies are "constantly conducting large controlled experiments on user behavior," and these experiments are often conducted "with the explicit goal of helping to increase revenue."

Yarkoni goes on to argue that "there's nothing intrinsically evil about the idea that large corporations might be trying to manipulate your experience and behavior. Everybody you interact with -- including every one of your friends, family, and colleagues -- is constantly trying to manipulate your behavior in various ways."

All the things that Yarkoni says above are true, yet there are issues here of significant concern. In a provocative forthcoming paper, Digital Market Manipulation, 82 George Washington Law Review (forthcoming 2014), Ryan Calo argues that companies "will increasingly be able to trigger irrationality or vulnerability in consumers -- leading to actual and perceived harms that challenge the limits of consumer protection law, but which regulators can scarcely ignore."

As I observed in my Privacy Self-Management article, "At this point in time, companies, politicians, and others seeking to influence choices are only beginning to tap into the insights from social science literature, and they primarily still use rather anecdotal and unscientific means of persuasion. When those seeking to shape decisions hone their techniques based on these social science insights (ironically enabled by access to ever-increasing amounts of data about individuals and their behavior), people's choices may be more controlled than ever before (and also ironically, such choices can be structured to make people believe that they are in control)."

Yarkoni is right that manipulation goes on all the time, but Calo is right that manipulation will be a growing problem in the future because the ability to manipulate will improve. With the use of research into human behavior, those seeking to manipulate will be able to do so much more potently. Calo documents in a compelling manner the power of new and developing techniques of manipulation. Manipulation will no longer be what we see on Mad Men, an attempt to induce feelings and action based on Donald Draper's gut intuitions and knack for creative persuasion. Manipulation will be the result of extensive empirical studies and crunching of data. It will be more systematic, more scientific, and much more potent and potentially dangerous.

Calo's article is an important step towards addressing these difficult issues. There isn't an easy fix. But we should try to address the problem rather than continue to ignore it. As Calo says: "Doing nothing will continue to widen the trust gap between consumers and firms and, depending on your perspective, could do irreparable harm to consumer and the marketplace."

James Grimmelmann notes that: "The study itself is not the problem; the problem is our astonishingly low standards for Facebook and other digital manipulators."

Conclusion

The Facebook experiment should thus be a wake-up call that there are some very challenging issues ahead for privacy that we must think more deeply about. Facebook is in the spotlight, but move the light over just a little, and you'll see many others.

And if you're interested in exploring these issues further, I recommend that you read Ryan Calo's article, Digital Market Manipulation.

This post first appeared on LinkedIn.

Daniel J. Solove is the John Marshall Harlan Research Professor of Law at George Washington University Law School, the founder of TeachPrivacy, a privacy/data security training company, and a Senior Policy Advisor at Hogan Lovells. He is the author of nine books including Understanding Privacy and more than 50 articles.