As a growing number of wrongful convictions in the U.S. judicial system are brought to light, psychologists are exploring what caused these miscarriages of justice and how they can be prevented. Over the years, for example, researchers have identified some of the reasons why eyewitnesses make mistakes and why suspects confess to crimes they did not commit. Consequently, reforms aimed at minimizing the incidence of misidentifications and false confessions are slowly taking hold.

A third factor has only recently begun to receive attention -- namely, the judgments of forensic science examiners. The forensic sciences encompass a broad range of disciplines whose 'scientific' basis was recently scrutinized and deemed inadequate by the National Academy of Sciences (NAS), which noted that forensic errors can and do lead to the convictions of innocent people. More than half of the 300+ cases in the Innocence Project's database of DNA exonerations involved unreliable or improper forensic science evidence, and last July, the U.S. Department of Justice (DOJ) launched a review of over 21,000 FBI cases to determine whether flawed forensic work has contributed to other wrongful convictions.

Surely some of these errors are attributable to deliberate fraud or negligence, as illustrated by recent scandals in Boston and New York City crime laboratories. However, in last month's issue of the Journal of Applied Research in Memory and Cognition (JARMAC), my co-authors Saul Kassin, Itiel Dror, and I argue that unconscious psychological influences can lead even experienced, careful, and well-intentioned forensic examiners to commit costly mistakes.

Decades of psychological research have consistently shown that humans exhibit "confirmation biases" in decision-making; that is, we naturally gather, interpret, and even create new evidence in ways that support what we already believe. Along these same lines, we propose that forensic analysts who hold an a priori belief in a suspect's guilt are vulnerable to forensic confirmation biases that make them more likely to see evidence as incriminating -- even when it is not.

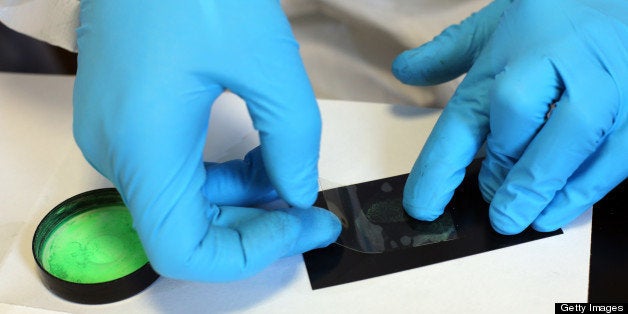

A growing body of real-world cases and research studies demonstrates this point. For example, following the 2004 Madrid train bombings, three FBI fingerprint experts confidently concluded that a latent print taken from a bag containing detonating devices belonged to Brandon Mayfield, an American Muslim attorney in Oregon. Mayfield spent 17 days in FBI custody before Spanish authorities identified the true perpetrator. At that point, the DOJ's Office of the Inspector General ordered a full review of the case and ultimately implicated "confirmation bias" as contributing to Mayfield's misidentification, adding that a "loss of objectivity" led examiners to see "similarities... that were not in fact present."

Inspired by this case, Itiel Dror and his colleagues cleverly tested whether forensic examiners can be biased by their expectations. They presented five fingerprint experts with prints that, unbeknownst to the experts, they themselves had judged to be a "match" earlier in their careers. When told that these prints were taken from the Mayfield case, four of the five experts now concluded that the prints did not match, suggesting that their judgments were sensitive to context. In a follow-up study, Dror and David Charlton gave experts case files containing prints that they had (unknowingly) examined before, along with additional information that implied either guilt (i.e., a confession) or innocence (i.e., an iron-clad alibi). When given this new information, the experts changed 17 percent of their own prior judgments.

Knowledge of other evidence against a suspect biases judgments in other domains as well. For example, it affects whether investigators see the suspect's face as similar to a computer-generated composite, whether eyewitnesses identify the suspect from a lineup, whether polygraph examiners score the suspect's charts as deceptive, whether DNA experts conclude that a complex DNA sample implicates the suspect, and whether jurors perceive auditory and handwriting evidence as incriminating.

Latent fingerprint analysis is one of the better-established domains of forensic science -- it has been routinely admitted in courts for nearly a century, and studies find that experts generally produce highly accurate judgments -- yet these human examiners are vulnerable to the same confirmation biases that plague us all. It follows that other, less 'scientific' domains of forensic science that involve visual comparisons (e.g., handwriting identification, microscopic hair and fiber analysis, and forensic odontology) could be similarly hampered by confirmation bias.

In a sense, these errors are more difficult to correct than the kinds of misconduct recently uncovered in Boston and New York, insofar as they are a natural byproduct of the way people think and cannot simply be 'willed away.' Is there a way to prevent the problem? The obvious first step is to insulate forensic examiners from extraneous information that could create biasing expectations; indeed, at least one Australian crime lab has already modified its procedures in this way to protect against bias. In many U.S. labs, however, it remains commonplace for examiners to be told of other evidence against the suspect before they test the forensic evidence, and practitioners have opposed changing this practice on both economic and pragmatic grounds.

We maintain that shielding examiners in this way would only strengthen the power of forensic evidence. Where examiners receive potentially biasing case information, mounting research raises concerns that this information could have tainted their analysis, which undermines our confidence in their judgment. But where examiners receive only the information their analysis requires, we can be more certain that their conclusions are the sole product of that analysis -- thereby allowing the evidence to "speak for itself."