Should we be shocked by scientific fraud, or is such misbehavior actually rather common?

It might not be something to celebrate, but scientists who commit research fraud are following in a grand tradition. The first recorded fraud in science took place in the second century AD. The Egyptian mathematician and astronomer Ptolemy manipulated data to support his astronomical models. When observations didn't fit with his ideas, he simply cast them aside.

The world's most celebrated scientist, Isaac Newton, also bent the rules. Whenever he produced a new edition of his masterwork, the Principia, Newton tweaked his calculations so that they appeared to agree precisely with the data of the day.

Newton did this to claim superiority over his scientific rivals. The falsified calculations, his biographer Richard Westfall wrote, were "a cloud of exquisitely powdered fudge factor blown in the eyes of his scientific opponents".

Anyone familiar with Newton's prickly and difficult personality would probably expect such behavior. More surprising is the fraud that Galileo carried out on Pope Urban VIII.

We think of Galileo as a martyr to the truth. His suggestion that the Earth moved around the sun earned him years of house arrest. The Catholic Church banned his celebrated book Dialogue Concerning the Two Chief World Systems for two centuries. It is rarely mentioned that the last chapter of this book, published in 1632, contained a blatant and deliberate fraud.

Galileo wrote the book because Pope Urban was genuinely interested in hearing why the Earth might move around the sun. Galileo believed the tides offered the most convincing demonstration. Unfortunately, he couldn't make his proof work.

Galileo constructed elaborate mathematical arguments showing that the motion of the Earth around the sun, combined with its daily spin on its axis, would cause the oceans to slosh back and forth, producing the tides. But the math gave only one high tide per day, and always at the same time.

Galileo lived in Venice. He and everyone around him knew that there were two high tides a day, and that they happened at ever-changing times.

What's more, Johannes Kepler had argued more than two decades earlier that the moon was involved in creating the tides. When people pointed this out, Galileo blustered his way through. He angrily accused his detractors of being silly, childish thinkers and of propagating "useless fiction."

When Einstein came to write a preface for a modern edition of Galileo's book, he said Galileo's fraud was acceptable because it was well-motivated. We can gloss over Galileo's questionable methods, Einstein said, because he had eventually been proved right.

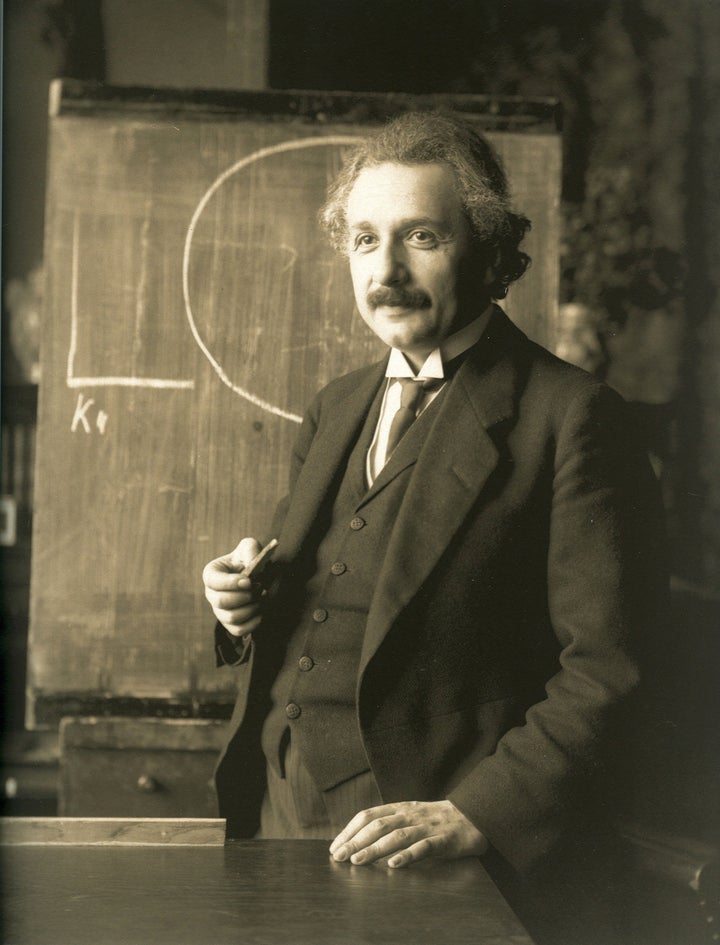

In an age when we demand high ethical standards from our scientists, that sounds somewhat scandalous. But Einstein knew just how difficult it can be to pin down the "correct" scientific results.

Though he claimed ownership of the world's most famous equation, E=mc², Einstein never managed to prove it.

In all, Einstein made eight attempts at a proof, and all of them contained errors, bogus assumptions or devious sleights of hand designed to cover up the proof's shortcomings.

In the long run, it didn't matter. Physicists knew the equation was correct because, to Einstein's annoyance, other scientists had already created flawless proofs.

On another occasion, though, Einstein committed a fraud in which he got things badly wrong.

Einstein had been conducting experiments to find out the cause of magnetism in iron. He had developed a theory, and the experiments, he said, matched it almost perfectly. The result would "silence any doubt about the correctness of the theory," he told the German Physical Society in 1915. What he didn't tell them was that he had also done experiments where the results were wildly different.

A few years later it emerged that Einstein's theory about magnetism was incorrect. Einstein only "proved" it because he had cherry-picked the results that fit his preconceptions.

This is part of what scientists call "normal misbehavior". In one large survey, roughly one-third of scientists admitted to having engaged in some kind of questionable research practice within the previous three years. Experiments rarely go perfectly, and scientists often have to use their intuition to separate useful results from results that arise from anomalies or from difficulties with their apparatus.

That's what the astronomer Arthur Eddington did in 1919 when he cherry-picked among his observations of an eclipse. The idea was to prove Einstein's general theory of relativity. However, Eddington's analysis of the data was questionable enough for the Nobel Prize committee to exclude relativity from Einstein's 1921 Nobel Prize for physics. Assessing the merits of relativity was impossible until it was "confirmed in the future," the committee said.

It works the other way, too: sometimes "confirmed" experimental results have to be swept aside. In their hunt for the structure of DNA, Francis Crick and James Watson stumbled for months because other people's published results, involving the angles between some chemical bonds, were incorrect. Crick said that the experience taught him "not to place too much reliance on any single piece of experimental evidence."

That, too, is acceptable in science. A scientist's job is not just to make discoveries but also to question, prod and poke at the discoveries of others.

Scientists know their colleagues will often take shortcuts. To be first to the truth often requires it. Crick once moaned that his colleague Rosalind Franklin was "too cautious" and "too determined to be scientifically sound and to avoid shortcuts."

The trick, though, is to be right. Anyone discovered following a false trail will find their colleagues only too eager to publicly expose the mistake.

This confrontational system causes a few fights and often exposes dirty tricks and shady shortcuts. Ultimately, though, it gets us to the right answer. Science in progress might not always be a pretty sight, but that's exactly what makes it so reliable in the end.

Michael Brooks is the author of the bestselling '13 Things That Don't Make Sense' and 'Free Radicals: The Secret Anarchy of Science,' out this month from The Overlook Press. He holds a PhD in quantum physics and has lectured at New York University, the American Museum of Natural History, and Cambridge University. He has written for The Huffington Post, Playboy, The Philadelphia Inquirer, The Independent, The Guardian, and The Observer. He is currently a consultant at New Scientist and writes a weekly column for the New Statesman.