College Rankings

It's time for the predictable cycle of party school rankings.

Based on cost of attendance, graduation rates and how well grads do in the workplace.

Learn which schools topped the rankings in law, business, nursing and more.

A ranking of schools by how many disciplinary actions they hand out per capita.

WHAT'S HAPPENING

The school was the site of the first mass shooting on an American college campus.

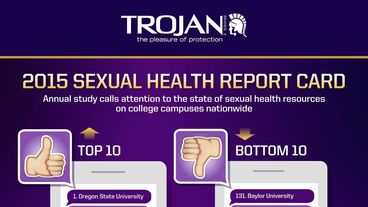

The annual ranking finds most campuses are making significant improvements.

The U.S. is down to just 63 schools in the top 200.

The website Rate My Professors used student review data to grade the schools.

This is when you need a red Solo cup emoji.

Most Brown University students would be willing to have sex on the first date.