On The Atlantic Wire Gabriel Snyder gives what we'd call a combinatorial analysis of the presidential election. I like the analysis not for what it says about the possible outcome but because it illustrates an influential idea in computer science, called computational thinking: formulating a problem so that it can be solved by an information-processing agent. Here's how it works in this situation.

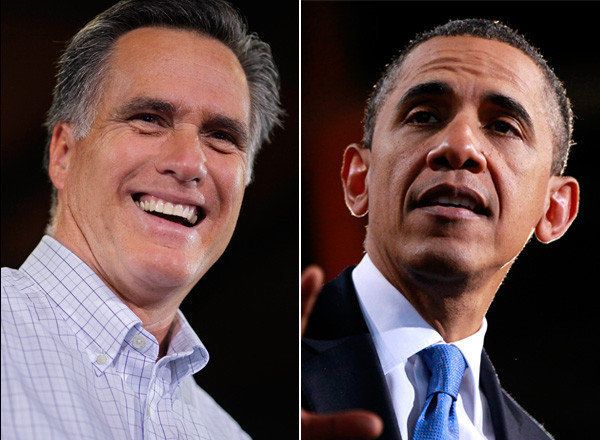

It's generally accepted that across most of the country, there's little doubt about which states' electoral votes (EV) will go to Obama and which will go to Romney. There's some uncertainty, though, about where the 110 total EV across nine swing states will go. Here's the main question that Snyder poses: How many different combinations of swing states lead to one of the candidates winning the election? Given what we expect from the non-swing states, Obama needs 33 EV or more from the swing states to win; Romney needs 77 or more.

Let's start with a list of the nine swing states, in some order (any order will do):

[FL OH NC VA WI CO NV IA NH]

And here are the electoral votes for those states:

[29 18 15 13 10 9 6 6 4]

Snyder is interested in all the possible ways that some subset of those values sum to a given value or greater (33 for Obama, or 77 for Romney). Here's one way to figure this out. Suppose Romney ends up winning Florida and Ohio but none of the rest of the swing states. We encode this outcome following the ordering of the states above, writing a 1 for winning a state and a 0 otherwise:

[1 1 0 0 0 0 0 0 0], or, more concisely, 110000000.

An encoding of Romney winning none of the swing states would be 000000000, or Romney winning all of them 111111111. We can think of our encoding as more than just patterns of ones and zeros; we've turned each outcome into a binary number. And what can we do with numbers, whether they're in binary or the more familiar decimal form? We can count.

0: 000000000

1: 000000001

2: 000000010

3: 000000011

4: 000000100

...

511: 111111111

We can write down a simple algorithm that counts from 0 to 511, and for each number we look at nine places in its binary representation. Each of those places represents a different state and thus a different EV value. If the binary representation has a 1 in a given place, we add the corresponding EV to a running total. When we're finished with the nine places, we check whether the running total meets or exceeds the candidate's target value. That's a combination of swing states that works for the candidate.

Using this algorithm or something close to it, Snyder figures that of the 512 possible combinations, 431 result in Obama winning the election and 76 give Romney the win (five additional ties would go to Romney).

Does this help us predict the results of the election? No. There are too many assumptions built into the analysis. But it does tell us something about the general landscape of the election. For example, Florida is typically described as a must-win state for Romney. With a change to our algorithm, we can see why. Instead of just testing whether a combination of swing states adds up to 77 EV or more, we count the number of times each state is a part of a winning combination. We find that there's just one combination in which Romney loses Florida and yet passes his EV threshold (by winning all the other swing states). It's certainly possible to carry out a more detailed and precise analysis (and Nate Silver does exactly that), but there's also value in simple approaches that can quickly tell us if we're looking in the right direction.

Why do I call this process "computational thinking"? Consider what we've done. We've taken a description of a situation and encoded it in a way that's easy for a computer to deal with, specifically a binary representation. We've laid out an algorithm that carries out a simple computation repeatedly. We've swapped out one simple computation for another, to get a different result we're interested in; we're relying on the modularity of the algorithm. All these are core concepts in computer science.

We could go further. For example, the algorithm I've laid out is brute force. For nine states we have 0 ... 511 or 512 combinations to consider, 2 to the 9th power. Adding a 10th state, we'd have 1,024 combinations, 2 to the 10th power. One additional item in the input to the algorithm doubles the amount of work it has to do. Suppose we had the idea of applying the same algorithm at the level of voting districts. But in Ohio alone there are more than 2,000 voting districts; the number of combinations we'd have to consider is enormous. To use a standard comparison, that number is larger than the number of atoms in the universe -- more than the number of atoms in 17 universes. So let's not do that.

Many computer scientists, including me, believe that computational thinking can give us a useful perspective on the world around us. Our world is full of information, and we can take advantage of it if we have good conceptual tools.