Over the remainder of the election campaign, HuffPost will be presenting graphical summaries of the modeling I've been doing behind the scenes for them over the last few months. Some additional background on how it all works seems warranted.

The goal is to summarize the "state of play" in states where we have even a little polling, along with the national trend in voting intentions. Note right away that this isn't a forecast of what will happen in November on Election Day, but rather a synthesis of available polling -- the web site is called Pollster, not Forecaster.

That said, it is important to note that there is a lot more going on than just poll averaging.

Every couple of hours, my computer programs check to see if there are any new polls in the Pollster database. We're at a point in the campaign now where the tempo of polling is ramping up and polls are being published throughout the working day. But even on a quiet day, survey houses like Rasmussen or Gallup are updating their rolling, national tracking polls. So at least once a day (but usually more often than that), my computer programs find new polls to integrate into the estimate of where each state is.

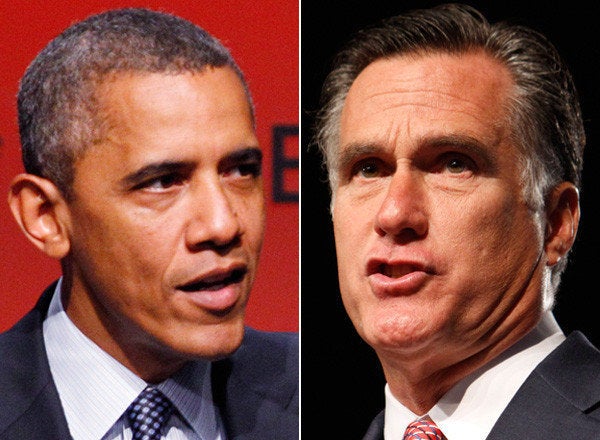

The goal is to form today's best estimate of the national and state-by-state levels of support for Obama and Romney. If we literally had no new polling on a given day, then today's best guess is simply yesterday's estimate. But the more interesting case is when, say, we have new national level polls, and a few state-level polls. How do we use that information to make estimates for all of the states we're tracking, even for states without new polling data?
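To make the "no new polls means yesterday's estimate" idea concrete, here is a minimal sketch of a one-state daily update. It is an illustration only, not the model behind the Pollster estimates: the function name, the `drift_var` parameter and its default value are assumptions made for the example, and it treats each state in isolation.

```python
def update_estimate(prev_mean, prev_var, polls, drift_var=0.25):
    """One day's update for a single state (all values in percentage points).

    polls is a list of (poll_value, poll_variance) pairs published today.
    drift_var is a hypothetical allowance for how far opinion can move overnight.
    """
    # With no new data the best guess is yesterday's mean, but we are a
    # little less sure of it, because opinion may have moved.
    mean, var = prev_mean, prev_var + drift_var
    for value, poll_var in polls:
        gain = var / (var + poll_var)        # weight given to the new poll
        mean = mean + gain * (value - mean)  # move part of the way toward it
        var = (1.0 - gain) * var             # each poll shrinks the uncertainty
    return mean, var


# A quiet day: the estimate is carried forward, with more uncertainty.
quiet_day = update_estimate(48.0, 1.0, [])
# A day with one new poll at 50: the estimate moves toward the poll.
busy_day = update_estimate(48.0, 1.0, [(50.0, 1.25)])
```

The less precise the new poll (larger `poll_variance`), the less the estimate moves.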

This is where the modeling comes in. My model assumes that states (and the national track) move together, but in clusters. That is, some states follow other states more closely than others. Further, some states follow the national trend more closely than others. I rely on historical election returns to help assign states to clusters, and for initial guesses as to the strength of the clustering; in exploiting the historical data I upweight recent presidential elections relative to less recent ones.

There is a big geographic component to the clustering (e.g., New England, the Plains, the deep South, the Pacific West). Since the national outcome is simply the sum of what happens in the states, all states track the national outcome to some extent; that said, in recent U.S. presidential elections some states have tracked the national swing more closely than others. For example, Indiana, New York, Arizona, New Mexico and (of course) Ohio and Michigan have tracked the national swing much more closely than, say, Mississippi and South Dakota. Accordingly, polls in, say, Ohio and Michigan tend to be more informative about the national trend (and vice versa) than polls from the deep South or the Plains.

In turn, this means that even though we may not have polls in every state on every day, we've got a plausible (if imprecise) model of how movement in one state might be related to movement in another state, and a model that links up state and national level changes in the vote. We'd always like more data rather than less, but the model lets us produce estimates day by day, even without a steady stream of polling data from each state, "borrowing strength" from states with polling data (or the national level) through the clustering.
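A toy version of "borrowing strength" for just two states may help. The sketch below assumes the two states' current estimates are jointly normal with a known correlation (in the real model the correlations are themselves uncertain), and shows how a poll in state A alone also moves the estimate for state B. The function name and all inputs are hypothetical.

```python
def borrow_strength(mean_a, mean_b, var_a, var_b, corr, poll_a, poll_var):
    """Update states A and B after a new poll in A only.

    corr is the assumed correlation between the two states' estimates.
    Returns (new_mean_a, new_mean_b, new_var_b).
    """
    cov_ab = corr * (var_a ** 0.5) * (var_b ** 0.5)
    surprise = poll_a - mean_a        # how far the poll is from what we expected
    total_var = var_a + poll_var      # prior uncertainty plus sampling noise
    new_mean_a = mean_a + (var_a / total_var) * surprise
    # B moves only through its covariance with A: no correlation, no movement.
    new_mean_b = mean_b + (cov_ab / total_var) * surprise
    new_var_b = var_b - cov_ab ** 2 / total_var
    return new_mean_a, new_mean_b, new_var_b


# A poll two points above expectations in A nudges B upward as well.
linked = borrow_strength(50.0, 50.0, 4.0, 4.0, 0.5, 52.0, 4.0)
# With zero correlation, B's estimate and its uncertainty are left unchanged.
unlinked = borrow_strength(50.0, 50.0, 4.0, 4.0, 0.0, 52.0, 4.0)
```

The stronger the assumed correlation, the more a poll in one state tells us about the other, and the more B's uncertainty shrinks without any B polling at all.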

The model also deals with the fact that different polls have different sample sizes, and so not all polls contribute equally to the model's estimates. The model also corrects for "house effects" that I talked about in an earlier post, the tendency for some survey houses to produce estimates that are systematically higher or lower for one candidate than other pollsters.
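The sample-size point can be illustrated with the textbook formula for the sampling variance of a proportion, p(1 - p)/n, under simple random sampling. The sketch below pools polls by weighting each one by the inverse of that variance; this is a standard stand-in for illustration, not a description of how the model itself weights polls, and the function names are made up.

```python
def poll_variance(share, n):
    """Sampling variance of a proportion under simple random sampling."""
    return share * (1.0 - share) / n


def pooled_share(polls):
    """Inverse-variance weighted average of (share, sample_size) pairs."""
    weights = [1.0 / poll_variance(share, n) for share, n in polls]
    weighted_sum = sum(w * share for w, (share, _) in zip(weights, polls))
    return weighted_sum / sum(weights)


# A 1,600-person poll at 52% counts for more than a 400-person poll at 48%,
# so the pooled figure lands closer to 52% than to 48%.
example = pooled_share([(0.48, 400), (0.52, 1600)])
```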

These house effect corrections are vital, letting us deal with the fact that some pollsters contribute many more polls than others; e.g., Rasmussen contributes 150 of the 720 presidential election polls in Pollster's collection of 2012 Obama-Romney head-to-head polls, Public Policy Polling contributes 103 polls, with another 109 survey houses contributing the remaining 467 polls. Without the house effect corrections, the model's estimate would be overwhelmed by any bias contained in the estimates produced by these more prolific pollsters. In future posts I'll dig into the specific estimates of house effects, with a specific focus on likely voter versus registered voter filters.
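Here is a deliberately crude sketch of why the correction matters when one house is prolific. It estimates each house's offset as the gap between that house's average and the average of all house averages, then subtracts the offset from each poll. The real model estimates house effects jointly with everything else; the house names and margins below are invented for the example.

```python
from collections import defaultdict


def house_effects(polls):
    """polls: list of (house, margin) pairs, margin in percentage points."""
    by_house = defaultdict(list)
    for house, margin in polls:
        by_house[house].append(margin)
    house_means = {h: sum(v) / len(v) for h, v in by_house.items()}
    # Average the house means, not the raw polls, so that a prolific
    # house does not get to define the baseline.
    baseline = sum(house_means.values()) / len(house_means)
    return {h: mean - baseline for h, mean in house_means.items()}


def corrected_average(polls):
    """Average of the polls after removing each house's estimated offset."""
    effects = house_effects(polls)
    adjusted = [margin - effects[house] for house, margin in polls]
    return sum(adjusted) / len(adjusted)


# Hypothetical data: house "A" polls three times as often and leans one way.
polls = [("A", -2.0), ("A", -2.0), ("A", -2.0), ("B", 2.0), ("C", 0.0)]
raw = sum(margin for _, margin in polls) / len(polls)
fixed = corrected_average(polls)
```

In this made-up data the raw average is pulled toward house "A" simply because it publishes more polls; after the correction, its volume no longer tilts the result.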

In addition, I aggressively down-weight polls that come from "one-off" pollsters (appearing just once in the Pollster data) and/or polls that are radically at odds with the rest of the polls for a given state.

So, for each day, for about 38 states (states where we have at least one poll in 2012) -- and for the national level -- I obtain an estimate of the state of play. The estimate is only that -- an estimate -- and so comes with some uncertainty. The uncertainty comes from multiple sources: (1) sampling error in the polls themselves; (2) uncertainty about the house effect corrections; (3) uncertainty about how quickly vote intentions are changing; (4) uncertainty about the strength of the correlations between and within clusters of states and the national level.

I take these sources of uncertainty into account when producing state-by-state probabilities of winning and the Electoral College summary. In states with less polling, we have more uncertainty about the state of play. Happily, these are generally states where the outcome isn't in much doubt (e.g., Utah).
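One common way to turn estimates with uncertainty into win probabilities and an Electoral College summary is simulation, sketched below. This is an assumption-laden illustration rather than the actual procedure: the state names, margins, standard deviations, electoral vote counts and `national_sd` value are all invented, and a single shared "national" error stands in for the cluster correlations described earlier.

```python
import random


def simulate(states, n_sims=10000, national_sd=1.5, seed=0):
    """states: dict of name -> (mean_margin, sd, electoral_votes).

    Returns (win_prob, ev_totals): each state's share of simulations won,
    and the electoral vote total from every simulation.
    """
    rng = random.Random(seed)
    wins = {name: 0 for name in states}
    ev_totals = []
    for _ in range(n_sims):
        # One error shared by every state, so states tend to miss together.
        national_shock = rng.gauss(0.0, national_sd)
        ev = 0
        for name, (mean, sd, votes) in states.items():
            margin = mean + national_shock + rng.gauss(0.0, sd)
            if margin > 0:
                wins[name] += 1
                ev += votes
        ev_totals.append(ev)
    win_prob = {name: count / n_sims for name, count in wins.items()}
    return win_prob, ev_totals


# Hypothetical states, not real 2012 estimates.
states = {
    "Safe": (20.0, 3.0, 10),
    "Tossup": (0.0, 3.0, 20),
    "Lost": (-20.0, 3.0, 5),
}
win_prob, ev_totals = simulate(states)
```

A thinly polled state would enter with a larger `sd`, but if its mean margin is lopsided the win probability barely changes, which is the Utah point above.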

Now that we're well and truly past the conventions, we're going to see a lot more state-level polling, particularly in "battlegrounds" like Ohio, Virginia, Pennsylvania and Florida. Already these states dominate the state-level polling in the Pollster database: e.g., 41 polls from Florida, 38 from Ohio, 15 from New York, 13 from California, 1 from Utah. More data from more states will mean less uncertainty in the state-by-state estimates and less reliance on the model and its underlying assumptions.

In the meantime, please enjoy the estimates and graphs I'm producing for Pollster.