Last week, researchers who work for Facebook released a study titled "Exposure to ideologically diverse news and opinion on Facebook." It was about a crucial topic: Does the increasing role of algorithms of personalization and "engagement" on online platforms end up filtering out viewpoints that are different from our own?

The study, published in Science, generated a flurry of media coverage with headlines such as "Facebook Echo Chamber Isn't Facebook's Fault, Says Facebook" (Wired), "Facebook says its algorithms aren't responsible for your online echo chamber" (Verge), "Facebook study shows that you -- not an algorithm -- are your own worst enemy" (Mashable) and so on.

So, can you stop worrying about the role of algorithms and personalization in creating echo chambers online? The shortest version is this: Absolutely not. The study itself actually showed that the algorithm plays a role. But before I explain, there are other lessons here worth considering.

First, we should be skeptical of uncritical and uninformed media coverage, as it can often end up repeating what powerful companies want you to hear. Even as technology plays an increasingly important role in our lives, we lack critical, smart and informed coverage -- with a few exceptions. We get more critical coverage of Apple's newest watch than we do of important topics like how algorithms help shape our online experience.

Second, it's really past time for the press -- and academics -- to stop being blinded by "large N" studies (this one had 10 million subjects) and to remember the basics of social science research. Even with 10 million subjects, your findings could be generally inapplicable. It all depends on who those 10 million were and how they were selected.

Third, and perhaps most important, Facebook is on a kick to declare that the news feed algorithm it creates, controls and changes all the time is some sort of independent force of nature or something that is merely responsive to you, without any other value embedded in its design. But in reality, the algorithm is a crucial part of Facebook's business model.

Last month at a journalism conference, Facebook's director of news and media partnerships said, "It's not that we control news feed, you control news feed by what you tell us that you're interested in." Before that, the 26-year-old engineer who heads the team that designs the news feed algorithm said, "We don't want to have editorial judgment over the content that's in your feed... You're the best decider for the things that you care about." The reality is far from that, of course, since Facebook decides what signals it allows (Can you "dislike" anything on Facebook? Nope), which ones it takes into account and how, and what it ultimately shows its 1.4 billion users.

This is not just an academic worry for me. As an immigrant, I rely on Facebook to keep in touch with a far-flung network of friends and family, some of whom live in severely repressive states where Facebook is among the few spaces outside of government control. In my own country, Turkey, social media outlets are routinely shut down or threatened with being shut down because they challenge governmental control over the censored mass media sphere.

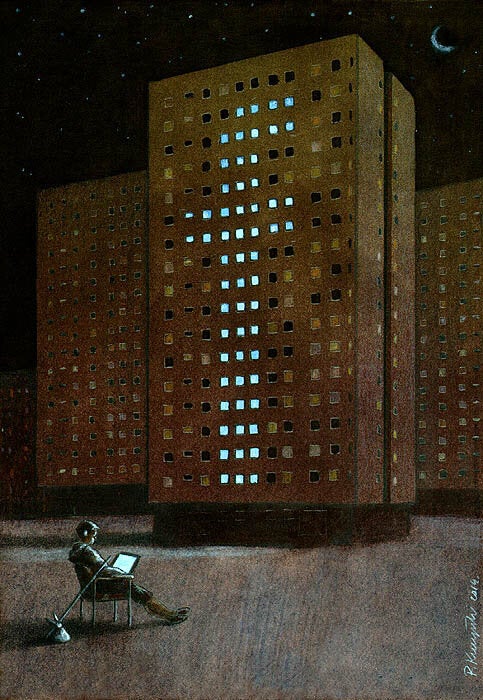

And for many people I encounter, Facebook is almost the whole Internet -- it's all they know how to use and it's where their social networks are. Due to these network effects, Facebook has no effective competitor, so market forces alone do not push it. This semester, I asked my students if they ever watch the evening news on television. I might as well have asked them how they refilled the ink bottles for their quills. Some had never watched it, not even once.

In August 2014, I watched it all online as Facebook's news feed algorithm flooded my timeline with the Ice Bucket Challenge, a worthwhile charity drive for ALS research, while also algorithmically hiding updates from the growing Ferguson protests. Many of my friends were furiously posting about Ferguson on Facebook and on Twitter, but I only saw it on Twitter. Without Twitter to get around it, Facebook's news feed might have algorithmically buried the beginning of what has become a nationwide movement focusing on race, poverty and policing.

Many people are not even aware of the degree to which these platforms are algorithmically curated for them. A recent study found that 62 percent of its participants had no idea that their Facebook feed was not everything that their friends posted. Some had been upset that their friends were ignoring them when, in fact, it was the algorithm that had hidden their updates. And companies with money can pay to play and force themselves into your feed.

So, what did the study find? First, let's talk sample. The researchers only studied people who had declared their political ideology (liberal or conservative), who interacted with hard news items on Facebook and logged on regularly -- only 4 percent of the population they had available. The details of this crucial sampling issue were buried in the appendix and erased from the conclusion, which made sweeping statements. This led a prominent researcher from Northwestern University who has published extensively on research methods to exclaim, "I thought Science was a serious peer-reviewed paper."

To trained social scientists and anyone with common sense, it is immediately obvious that a politically engaged, active 4 percent of the Facebook population is likely to be different from ordinary Facebook users. The press coverage missed this -- even the New York Times, which only added that all-important sampling qualification and changed its sweeping title after I and others loudly objected on Twitter.

And here's more: The study found that the algorithm suppresses the diversity of the content you see in your feed by occasionally hiding items that you may disagree with and letting through the ones you are likely to agree with. The effect wasn't all or nothing: for self-identified liberals, one in 13 diverse news stories was removed, for example. Overall, this confirms what many of us had suspected: that the Facebook algorithm is biased towards producing agreement, not dissent. This is an important finding -- one you may have completely missed in the press coverage about how it's all your fault.

Also buried in an appendix was a crucial finding about "click-through rates" on Facebook. The higher an item is in the news feed, the more likely it is to be clicked on -- a combined effect of placement and the news feed guessing what you would click on. The chart showed a precipitous drop in clicks on lower-ranked items. This finding actually highlights how crucial the algorithm is. But it was not really discussed in the main paper.

The researchers also found that people are somewhat less likely to click on content that challenges them: 6 percent fewer clicks for self-identified liberals and 17 percent fewer clicks for conservatives. And then, confusingly, they compared this effect -- something well-known and widely understood, so not really contested -- to the effect of Facebook's algorithm, as if they were independently studying two natural phenomena, rather than their own company's ever-changing product (and even though the effect sizes were fairly similar rather than one dwarfing the other).

Imagine a washing machine that destroys some of the items of clothing you put in it. Now imagine researchers employed by the company publishing a study that gives you a number -- one in 13 clothing items is destroyed by machines manufactured at a small plant that produces 4 percent of the company's devices. Now imagine the company also goes on to research how people donate their own clothes to charity, or don't take care of their clothes as well as they should. The headline wouldn't be: "It's your fault that your clothes are destroyed."

It's not that the study is useless. It is actually good to know this effect, and its magnitude, even for a 4 percent subsample, though I'd also like to know more about how Facebook decides what to publish. Journalists have asked the company how it vets and approves research for publication and have not been given a clear answer. But regardless of what Facebook does, we need the press to start taking technology seriously, as a beat that deserves scrutiny, understanding and depth. It's too important.