By Tanya Lewis, Staff Writer

Published: 07/12/2013 10:29 AM EDT on LiveScience

Technology for monitoring brain activity and eye movements might someday be used to detect when a person is falling asleep while driving, and alert them to prevent an accident.

Researchers in England are working to combine two high-tech tools — high-speed eye-tracking and electroencephalography (EEG) brain recording — to understand what's happening in the brain while the eyes are moving.

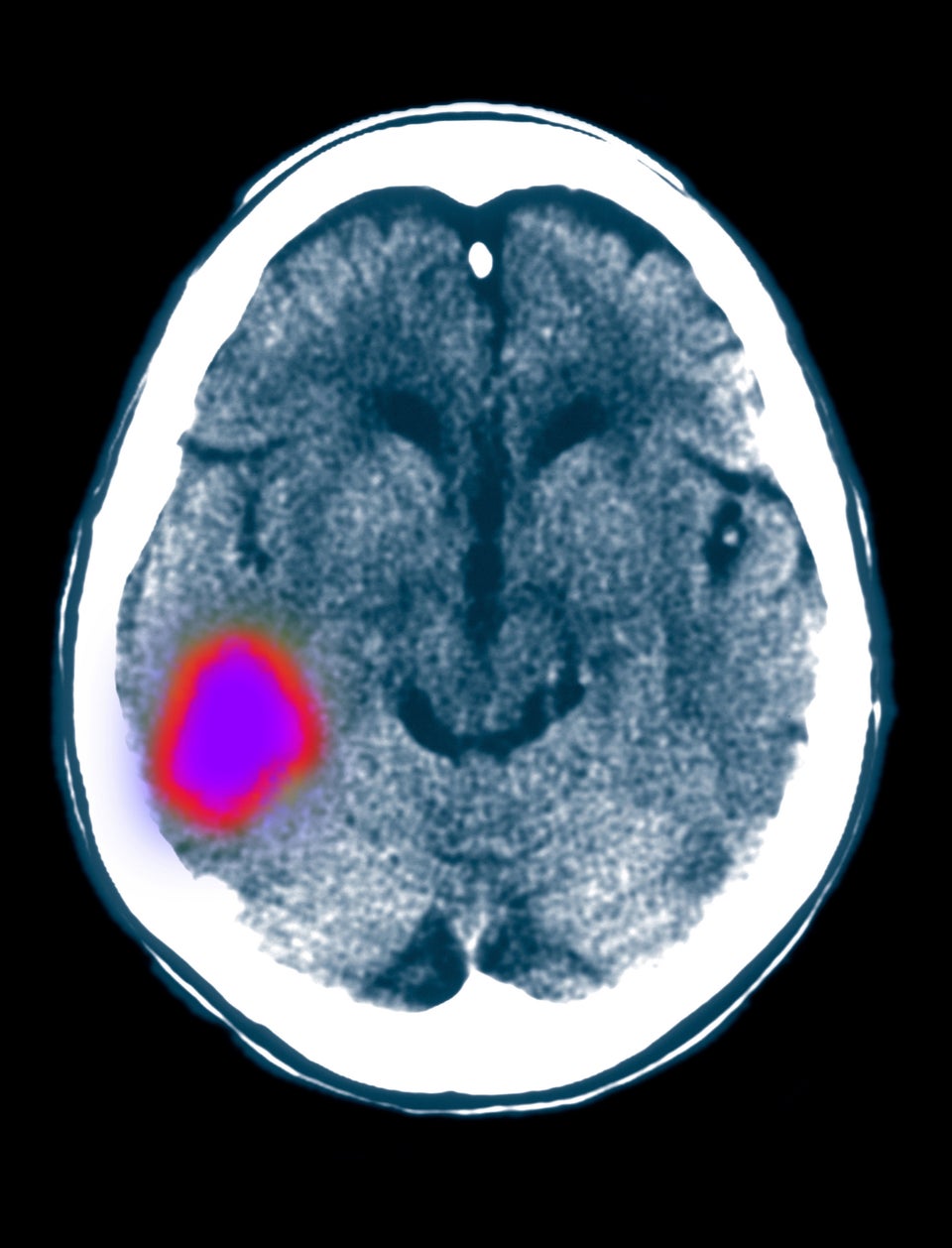

Electroencephalography involves placing sensors on a person's scalp to record the electrical murmurs of the brain's many neurons. The researchers measure EEG while simultaneously measuring eye movements.

"This is actually a very challenging task, because whenever we move our eyes, this introduces very large artifacts into the EEG signal," said neuroscientist Matias Ison of the University of Leicester in England, who is part of the research team.

Scientists could use this technology to detect the telltale signs of sleepiness in a driver, looking for characteristic patterns of brain activity and erratic patterns of eye movement that indicate a person is in the early phase of falling asleep. Indeed, systems that use eye-tracking to detect sleepy drivers have already been developed. But systems that monitor brain activity as well could greatly improve detection.

Fatigue is estimated to cause about 20 percent of motor vehicle accidents in the United Kingdom (where the research is being conducted), and it plays a significant role in accidents in the United States and Australia, too, according to the U.K. Department for Transport.

The brain and eye-tracking technology could also be used to develop brain-computer interfaces, which aim to restore movement or communication to people with serious movement disabilities, and in fact, some systems already use them. For example, people with amyotrophic lateral sclerosis (Lou Gehrig's disease), a disease that causes progressive degeneration of motor neurons, maintain good control of their eye movements until late stages of the illness, Ison said. Incorporating eye-tracking with EEG control could lead to improved brain-computer interfaces, he said.

But at this point, Ison and colleagues are still trying to understand the basic mechanisms behind eye movements and brain activity. These mechanisms are important for, say, recognizing a friend in a crowd. People look at individual faces in sequence until they find a familiar one, but what is the brain doing? Until now, researchers have studied this phenomenon by showing participants pictures and telling them not to move their eyes, because eye movements would create artifacts in the brain signals.

"There was a large gap between the way in which we were able to study the brain and the way in which things occur" in reality, Ison told LiveScience. He said he hopes to bridge that gap. His current experiments involve having a person search for a face in a crowd using natural eye movements.

The first EEG was built more than 80 years ago, and scientists have been using it for research and clinical applications for the past 50 years, Ison said. Still, "we are really only starting to understand how the brain works [during] natural viewing under real-life conditions."

Original article on LiveScience.com.