Exactly 20 years -- and five presidential election seasons -- ago, at the end of August 1992, I was flying from London back to Boston, and at 41,000 feet had one of the most interesting ideas in my professional life. I was then a professor of mathematics and statistics at what is today called Bentley University (then Bentley College) and about to start teaching an advanced statistics course. What would happen, I asked myself, if instead of giving my class the usual boring examples, I had them do a class project: use statistics to predict the results of the upcoming presidential election?

Professional polling organizations like Gallup have thousands of employees and bottomless pockets, but the hubris of youth told me that I knew statistics better than they did, and that with 25 students, 12 phone lines, and a budget (generously provided by the college) to make a mere 1,000 phone calls to voters around the country, I could call the election with high accuracy. We spent the semester learning about sampling methods, stratification, bias in surveys, and sampling distributions, all in preparation for our big event. Then, the night before the election, we stayed up late in our "operations center," each group of students manning a phone, armed with a state-of-the-art random sampling scheme we had developed throughout the course that would give every voter in the United States an equal chance of being selected for our representative sample. Over takeout pizza and countless cups of coffee, we ran a poll of 1,000 voters that perfectly spanned the entire United States, with all its regions, area codes, and phone exchanges proportionally represented in our sample. And we did it! We were able to call the results of the election to within half a percentage point for one of the three candidates who ran that year, Ross Perot, and within 1% and 1.5%, respectively, for the other two: Bill Clinton and George H.W. Bush. A good experimental design allowed us to do so well -- obtain a result of higher overall accuracy than that of Gallup -- and our predictions were reported in some newspapers and on radio programs.

The point is that, by 1992, telephones had become a viable way of conducting political polls. But it hasn't always been that way. Before 1936, an important magazine that no longer exists, the Literary Digest, had been able to call presidential elections so well that the New York Times would regularly report the Digest poll results on its front page during every election campaign. In 1936, Digest editors decided to outdo themselves, hoping to gain even more prestige for their magazine. They would collect a sample of unprecedented size: 10 million voters! (Of these, 2.4 million responded -- still an immensely large sample.) The sheer size of the sample, the researchers believed, would guarantee them supreme accuracy. Unfortunately, they did not fully understand the concepts of randomness and bias. The Literary Digest was a conservative publication, and its readers tended to vote Republican. The Digest used its readership as one source of sampled voters, thus introducing a bias. But two more sources of bias existed -- and they are more interesting for us here -- one was automobile registration plates, and the other was telephone numbers!

Now, today we poll people using the phone all the time. But this was 76 years ago: People who had cars and/or phones tended to be wealthier, and wealthy people, as we know, tend to vote Republican. So the frame, the statistical base for the sampling, was highly flawed -- it had a built-in bias to the right -- and so even a fantastically large sample size of 2.4 million could not make that built-in bias go away. (There is also the problem of non-response, the fact that of 10 million people, fewer than a quarter responded; but that is another issue. Incidentally, my students told the people they polled that their grade in the class depended on their answering the poll, so our non-response was close to zero!) Because their vaunted sample indicated that the Republican candidate, Kansas Governor Alfred Landon, would win the election, the magazine went boldly out with this prediction -- prominently reported on the front page of the New York Times and other newspapers. History decided otherwise, and the Democratic candidate, Franklin Delano Roosevelt, won the election in a landslide. The Literary Digest soon closed its offices in disgrace, having lost both face and readership.
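The contrast between a huge sample from a skewed frame and a small sample from a sound one can be illustrated with a toy simulation. This is only a sketch: the two support figures below are hypothetical numbers chosen for illustration, not the actual 1936 results, and the helper `poll` is an invented name.

```python
import random

random.seed(1936)  # fixed seed so the run is repeatable

# Hypothetical figures, chosen only for illustration (not the 1936 results):
TRUE_SUPPORT = 0.60   # share of all voters backing candidate A
FRAME_SUPPORT = 0.45  # share backing A among people in the skewed frame

def poll(support, n):
    """Share of n independently drawn respondents who back candidate A."""
    return sum(random.random() < support for _ in range(n)) / n

huge_biased = poll(FRAME_SUPPORT, 2_400_000)  # enormous sample, skewed frame
small_random = poll(TRUE_SUPPORT, 1_000)      # modest sample, sound frame

print(f"true support:              {TRUE_SUPPORT:.1%}")
print(f"2.4 million, biased frame: {huge_biased:.1%}")
print(f"1,000, random sample:      {small_random:.1%}")
```

Under these assumptions the huge poll settles very precisely on the wrong number, because more respondents only shrink the random error around the frame's own value, while the small random poll lands within a few points of the truth.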

So why am I telling you this story? In 1936, using telephone numbers to generate a frame from which to collect a random sample was a prescription for disaster. By 1992, phones had become the most efficient way to generate good samples because they were easy to use (who wants to travel from town to town, knocking on doors?) and because in the meantime they had become ubiquitous and were no longer owned mainly by richer people, so the built-in bias was almost completely gone. (I have to say "almost" because there are people with no phones, but they are very few and their phonelessness may not be as highly income-dependent as it would have been in 1936). Another interesting fact to note here is that sample sizes need not be very large. A well-designed survey, in which good probability sampling is carried out, may well contain as few as 1,000 voters and still give excellent information. (The statistical rule is that in a random sample of size n, the sampling error at 95% confidence is roughly plus or minus one divided by the square root of n. Thus, for a sample of size 1,000, the sampling error at 95% confidence is plus or minus about three percentage points.*)
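The rule of thumb can be checked with a few lines of Python. This is a minimal sketch: the function names `rough_margin` and `normal_margin` are made up for the example, and the second function assumes the worst case p = 0.5 described in the technical note at the end.

```python
import math

def rough_margin(n):
    """Rule of thumb from the text: about 1 / sqrt(n) at 95% confidence."""
    return 1 / math.sqrt(n)

def normal_margin(n, p=0.5, z=1.96):
    """Normal-approximation margin of error: z * sqrt(p * (1 - p) / n)."""
    return z * math.sqrt(p * (1 - p) / n)

# Compare the two for our class poll and for the Digest's 2.4 million replies.
for n in (1_000, 2_400_000):
    print(f"n = {n:>9,}: rule of thumb {rough_margin(n):.2%}, "
          f"normal approximation {normal_margin(n):.2%}")
```

For n = 1,000 both versions come out at roughly three percentage points. For 2.4 million the sampling error is a small fraction of a point, which is exactly why sampling error alone cannot explain the Digest's miss.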

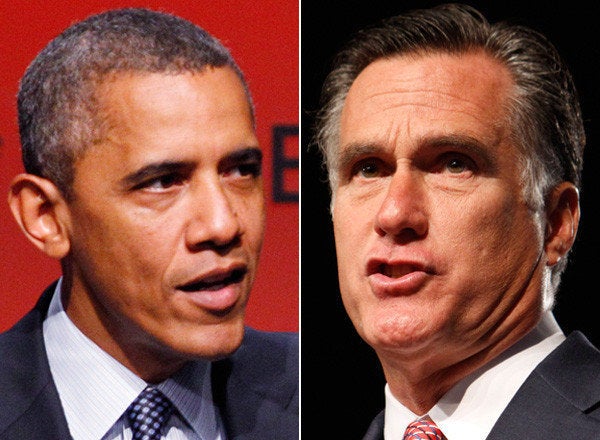

We have now moved to the next level of technology -- from phones to the Internet. And the question is: Have we progressed to the point at which using the Internet as a source of statistical information is valid? And this is what brings me to Facebook. Both President Obama and Mitt Romney have their own Facebook pages, on which Facebook users can click the "like" button. I tracked the "likes" for Obama and Romney over three days, and here is what I found:

Barack Obama:

28,004,524 likes on August 28, 2012

A day later, after the GOP convention nominated Mitt Romney:

28,014,250 likes

And a day later:

28,023,918 likes

Mitt Romney:

5,332,105 likes on August 28, 2012

A day later, after the GOP convention nominated him:

5,440,065 likes

And a day later:

5,482,806 likes

First, from these data it appears that Obama is more liked than Romney by a ratio of five to one (although, as president for almost four years now, Obama has had more of a chance to collect "like" clicks). Then, we see some interesting trends here: Obama gained roughly 10,000 "likes" a day over two days. But Romney gained more than 100,000 "likes" the day he was formally nominated by the GOP at the convention in Tampa, and more than 40,000 "likes" the following day. It appears to me that following the two "like" counts, for Obama and for Romney, *might* be an interesting way of tracking something that may act as a proxy for the popular vote on November 6 as we move through time -- something like a continuous kind of opinion poll. I italicize "might," and I am being very tentative here, because of the cautionary tale of the Literary Digest. I think that this might be an interesting pair of statistics to follow as they change through time precisely because I want to know whether or not there is a bias in using Facebook as an indication of who might win the election.
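The arithmetic behind these figures is easy to reproduce, and the same few lines could be rerun as new counts come in. This is a minimal sketch using the six counts listed above; the names `likes` and `daily_gains` are invented for the example.

```python
# "Like" counts from the three days reported above.
likes = {
    "Obama": [28_004_524, 28_014_250, 28_023_918],
    "Romney": [5_332_105, 5_440_065, 5_482_806],
}

def daily_gains(counts):
    """Differences between consecutive daily counts."""
    return [later - earlier for earlier, later in zip(counts, counts[1:])]

gains = {name: daily_gains(counts) for name, counts in likes.items()}
ratio = likes["Obama"][0] / likes["Romney"][0]  # ratio on August 28, 2012

for name, per_day in gains.items():
    print(name, per_day)
print(f"Obama-to-Romney ratio on the first day: {ratio:.2f}")
```

The gains work out to 9,726 and 9,668 for Obama and 107,960 and 42,741 for Romney, with a first-day ratio of about 5.25 to one.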

Is using Facebook like using phone numbers in 1936? -- meaning, is there an inherent bias here? The income element is probably not there: Facebook users are not, by any indication, either richer or poorer than nonusers. But with Facebook there may be two potential sources of bias: one is age, and the other is sensitivity to privacy issues. It appears that Facebook users may tend toward younger segments of the population, but I don't know whether this is a fact; and many non-users seem to have an obsession with "privacy." If age and sensitivity to privacy move voters either to the left or to the right, then using Facebook as an indicator of who is likely to win the November election, as measured at a given moment in time, is flawed. Otherwise, it may be an excellent, fast and easy rough source of some approximate kind of information about where the popular vote might be heading. Another question is whether Facebook users are quick enough to "unlike" a candidate once they change their minds about him. This may be an important hidden factor here.

How you can help: You could go to Facebook from time to time and in the "Comments" below paste what you see as the number of "likes" for each candidate. Then, after the election results are out, we will see whether this polling method worked or not (although one result will not necessarily tell us whether the method is biased or not -- the Literary Digest did get it right, by chance, several times before its fatal debacle).

_____________________________

* Technical Note: With two candidates, the number of sampled voters favoring one candidate follows a binomial distribution, and the variance of the sample proportion is p(1-p)/n, where p is the actual proportion of votes for the candidate. With a large sample, we approximate the binomial distribution with a normal distribution, so we use the multiplier 1.96 for 95% confidence. Once the election result is known, the sampling error (or "margin of error") at 95% confidence can be calculated as 1.96 times the square root of the expression above. Before the results of the election are known, p is unknown, but the expression p(1-p) reaches its maximum (as a fun exercise in basic calculus will show) at p=0.5, which gives an upper bound. An upper bound for the sampling error at 95% is then obtained with the 1.96 almost perfectly canceling the square root of (0.5)(0.5), since 1.96 times 0.5 is 0.98, leaving the rough upper-bound estimate of plus or minus one over the square root of n for the sampling error of the survey. With three candidates, the actual distribution is multinomial, rather than binomial, and the formulas are more complicated.
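For readers who prefer symbols, the argument in the note can be written out as follows. The symbol E for the 95% sampling error is introduced here only for convenience; everything else follows the note.

```latex
% p(1-p) is maximized where its derivative vanishes:
\frac{d}{dp}\,\bigl[p(1-p)\bigr] = 1 - 2p = 0
\quad\Longrightarrow\quad p = \tfrac{1}{2},
\qquad p(1-p) \le \tfrac{1}{4}.

% Upper bound on the 95% sampling error E:
E = 1.96\,\sqrt{\frac{p(1-p)}{n}}
\;\le\; 1.96\,\sqrt{\frac{0.25}{n}}
= \frac{0.98}{\sqrt{n}}
\approx \frac{1}{\sqrt{n}}.
```

With n = 1,000 the bound is about 0.031, or roughly three percentage points.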