If a feature fails to load on a live site, does it make a sound?

It is HuffPost software engineer Anil Kadimisetty's job to make sure that it does.

Anil addressed over 40 Meetup attendees on the sixth floor of AOL headquarters in New York Wednesday night and explained how engineers can verify users are experiencing their products the way they should.

Anil, who came to HuffPost five months ago from Squarespace, has been working in test automation for almost 10 years. He described the "manual boring stuff" that used to be necessary to test software, and the audience audibly commiserated.

Automated tests act like a "proxy user," executing the functions of a web application like a human customer would. The tests result in a list of errors: specific places where the code failed and screenshots of the browser at the time of the error. Software engineers can then fix these errors before a real customer finds them.

The Huffington Post website might seem like an impossible product for testers.

Anil, an engineer for HuffPost Live, performs tests on the HuffPost Live production server, but also on a controlled beta server (a beta site has the same layout and features as the live site, but with dummy content, which makes it great for experimenting with new code).

But even on a beta site, it's still very difficult to test dynamic elements, particularly if images and Flash videos are involved.

As an engineer for HuffPost Live, Anil found a temporary way around this problem: he runs text comparisons, not image comparisons. Image comparisons are incredibly tedious and involve setting coordinate constraints on where the image is on the screen, which differs depending on what device is being used to view the image (a waste of bandwidth and a headache to write about). Instead of checking to see if the correct image is appearing, Anil's tests look for the titles and descriptions associated with the image.
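The text-comparison idea can be sketched roughly as follows. This is only an illustration using Python's standard library, not Anil's actual test code: the class names `segment-title` and `segment-description`, the sample markup and the helper `check_segment_text` are all assumptions, since the article does not show the real HuffPost Live page structure.

```python
from html.parser import HTMLParser


class SegmentTextParser(HTMLParser):
    """Collect the text of elements marked as a segment title or description."""

    # Hypothetical class names; the real page markup is not given in the article.
    WATCHED = {"segment-title": "title", "segment-description": "description"}

    def __init__(self):
        super().__init__()
        self.found = {}
        self._current = None

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        for cls in classes:
            if cls in self.WATCHED:
                self._current = self.WATCHED[cls]
                self.found[self._current] = ""

    def handle_endtag(self, tag):
        # Simplification: assumes watched elements contain plain text only.
        self._current = None

    def handle_data(self, data):
        if self._current is not None:
            self.found[self._current] += data


def check_segment_text(page_html, expected):
    """Return a list of error strings; an empty list means the text matched."""
    parser = SegmentTextParser()
    parser.feed(page_html)
    errors = []
    for field, want in expected.items():
        got = parser.found.get(field, "").strip()
        if got != want:
            errors.append(f"{field}: expected {want!r}, found {got!r}")
    return errors


# Made-up sample page: the image itself is never inspected, only its text.
SAMPLE_PAGE = """
<div class="segment">
  <img src="thumb.jpg">
  <h2 class="segment-title">Budget Cut Conundrum</h2>
  <p class="segment-description">A hypothetical description.</p>
</div>
"""

if __name__ == "__main__":
    print(check_segment_text(SAMPLE_PAGE, {"title": "Budget Cut Conundrum"}))
```

The check never looks at pixels or screen coordinates, so it gives the same answer whatever device renders the page. The trade-off is that it cannot tell whether the right image is actually showing next to that text.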

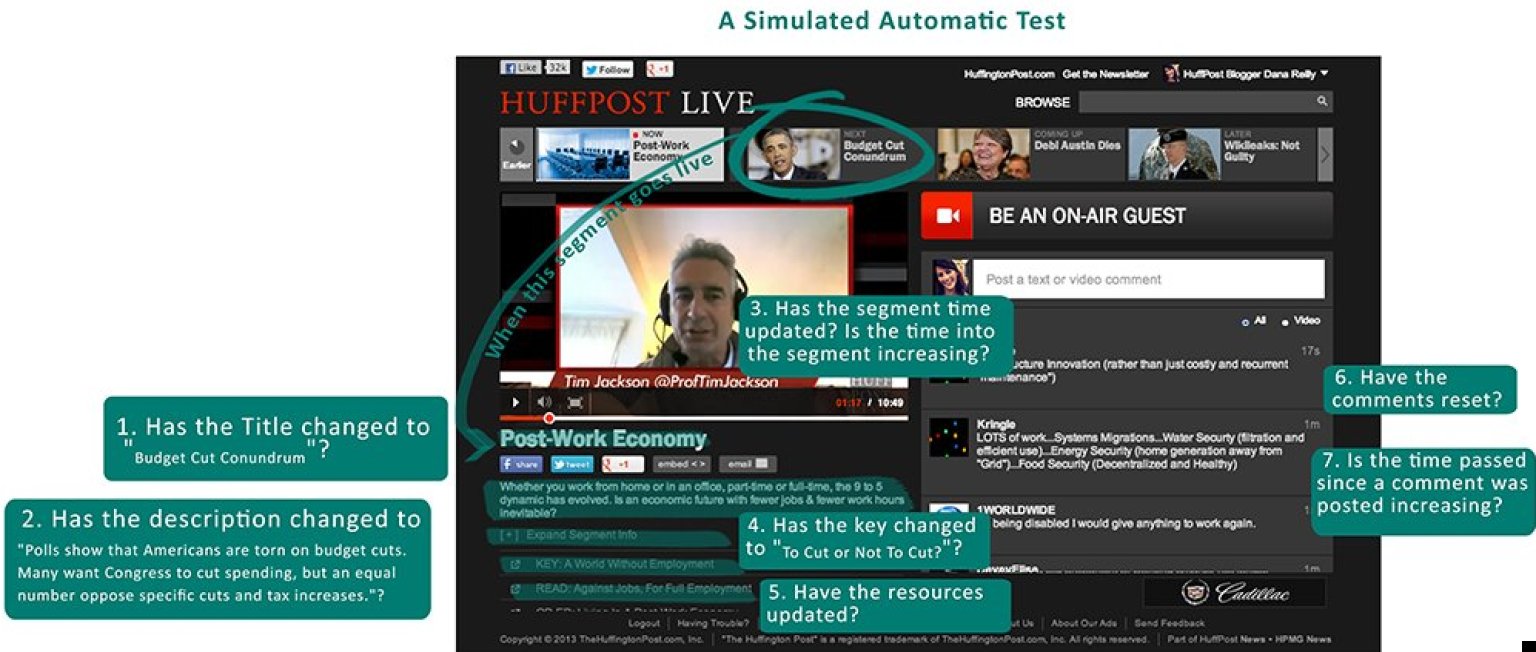

Below is an illustration of the kind of questions a test would run through to make sure a video segment (in this case "Budget Cut Conundrum") has transitioned successfully on the air.

Simultaneously, other tests perform a search in the search box (Anil uses the test word "Obama" because there should always be new results), write a comment in the comments section and browse the archives. All of this is executed by Sauce Labs, which has the hardware to run all of these tests.

This method is also a waste of power.

"Right now, there is currently no one framework that properly addresses our kind of content," he said.

What Anil proposed at the Meetup is an integration of two frameworks: Selenium and Sikuli. Selenium is now an industry standard for automating browsers, while Sikuli is a newcomer and is still being developed out of the University of Colorado Boulder.

Here is what each script framework brings to the table:

Sikuli can spot changes to

- Drag and drop from desktop to the website

Selenium can spot problems with everything else.

To know with 100% certainty that the correct video is playing on Live or the right photo appears on the front page next to its corresponding ALL CAPS HEADLINE in the splash, we need to use Sikuli's image-based framework. To know our articles are loading and our comments are populating, we should keep using Selenium.

Anil is close to implementing a hybrid framework to work for all of the HuffPost Media Group sites.

"The way I think automated tests should be: check out code, run maven test. Two steps," he said. "All basic actions are abstracted and reused."

Translation: the tools we use should produce code flexible enough to deal with the ever-evolving products they test.
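One way to picture "basic actions abstracted and reused" is the sketch below. It is a hypothetical illustration, not the hybrid framework described at the Meetup: `RecordingBrowser` is a stand-in for a real browser driver, and the element names `search-box`, `search-submit` and `search-results` are invented for the example.

```python
class RecordingBrowser:
    """Stand-in driver that records actions; a real suite would wrap a browser here."""

    def __init__(self, canned_text):
        self.calls = []
        self._canned_text = canned_text  # text the fake page "shows" per element

    def type_into(self, field, text):
        self.calls.append(f"type {field}={text}")

    def click(self, target):
        self.calls.append(f"click {target}")

    def text_of(self, target):
        return self._canned_text.get(target, "")


def search(browser, term):
    """Reusable basic action: any test that needs a search calls this."""
    browser.type_into("search-box", term)
    browser.click("search-submit")
    return browser.text_of("search-results")


def check_search(browser):
    # "Obama" is the test word mentioned in the article.
    assert search(browser, "Obama") != "", "no search results"


def run_all(checks, make_browser):
    """Run every check against a fresh browser and collect failures as a list."""
    errors = []
    for check in checks:
        try:
            check(make_browser())
        except AssertionError as exc:
            errors.append(f"{check.__name__}: {exc}")
    return errors


if __name__ == "__main__":
    print(run_all([check_search], lambda: RecordingBrowser({"search-results": "3 results"})))
```

Because the checks only talk to `search` and the other shared actions, a change to the search box markup would be fixed in one place rather than in every test.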

And where better to test code against fast-changing, multi-platform content?

Anil's slides from the Meetup are available here on his personal blog.
