The question “Does the machine understand?” arises with any current-generation consumer machine learning application, such as an online chatbot, a translation function on a mobile phone or a home speech assistant, because current natural language processing consists largely of transactional, one-way responses. It is perhaps similar to the question Alan Turing raised at the opening of his 1950 paper: “Can machines think?”. Yet “understanding” something within a context can mean something different from the act of “thinking”, narrowly or more broadly, depending on the problem or frame space.

For example, suppose an algorithm responds to the question “How far is it from the Milky Way to the Andromeda Galaxy?” with the answer “2.537 million light years”. If the next question is “How many stars does it have?”, the context of the original question may be lost. The algorithm may then be unable to answer “1 trillion”, to understand that “it” refers to the galaxy in question, or to ask whether the question relates to our own galaxy or to M31, another name for the Andromeda Galaxy. When more general inquiries requiring general knowledge arise, for example during a travel journey, the exchange can quickly become open-ended and unstructured, expanding the knowledge and context of that journey and the many possible permutations that require knowledge, inference, estimation and understanding.
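The loss of context in the galaxy example can be sketched in a few lines of Python. This is a toy illustration, not how any real assistant works: the `FACTS` table, the keyword matching and the `ContextualResponder` class are all hypothetical, and the rule "resolve 'it' to the last entity mentioned" is a deliberately naive assumption.

```python
# Toy knowledge base; the two facts are the figures quoted in the text above.
FACTS = {
    ("andromeda", "distance"): "2.537 million light years",
    ("andromeda", "stars"): "1 trillion",
}


def detect_topic(question):
    """Crude keyword match standing in for real language processing."""
    q = question.lower()
    if "how far" in q:
        return "distance"
    if "how many stars" in q:
        return "stars"
    return None


def detect_entity(question):
    """Return the galaxy named in the question, or None if only 'it' is used."""
    q = question.lower()
    if "andromeda" in q or "m31" in q:
        return "andromeda"
    return None


def stateless_answer(question):
    """Answer each question in isolation, with no memory of earlier turns."""
    key = (detect_entity(question), detect_topic(question))
    return FACTS.get(key, "Sorry, I don't know what 'it' refers to.")


class ContextualResponder:
    """Remember the last entity mentioned so a later 'it' can be resolved."""

    def __init__(self):
        self.last_entity = None

    def answer(self, question):
        # Fall back on the remembered entity when the question names none.
        entity = detect_entity(question) or self.last_entity
        self.last_entity = entity
        key = (entity, detect_topic(question))
        return FACTS.get(key, "Could you clarify which galaxy you mean?")
```

The stateless function answers the first question but fails on the follow-up, while the contextual responder answers both. Neither version understands galaxies in any sense; the second simply carries one extra piece of state forward.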

The argument goes that machine intelligence does not “understand” the meaning of the text or the question. It recognizes only the syntax of the voice, text or image pattern and its association with a library, or neural network, of trained responses.

This is a central problem in today’s advances in artificial intelligence, which are increasingly sophisticated in the speed and scale of their data and program modelling. But how much further forward are they in intelligent understanding and response?

Several thought experiments have been developed by scientists and philosophers in fields ranging from human cognition and machine intelligence, through sentience (the ability to perceive and feel things), to the very basis of consciousness and the meaning of life. A thought experiment is designed to examine a problem that is beyond realistic real-world experiment. In artificial intelligence, however, these thought experiments have been widely debated and challenged by researchers, and by physical experiments that have "beaten" these tests.

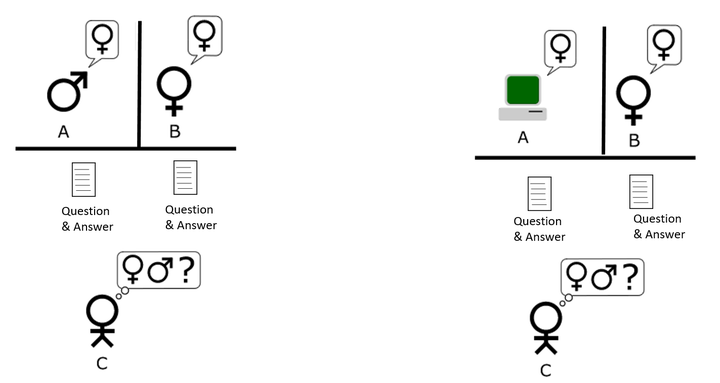

The Imitation Test

In 1950 the computer scientist Alan Turing described the “imitation game”, which is played with three people: a man (A), a woman (B), and an interrogator (C) who may be of either sex. The object of the game for the interrogator is to determine which of the other two is the man and which is the woman (1). Turing then asked what would happen when a machine takes the part of A in this game.

Eugene Goostman, a chatbot program simulating a 13-year-old boy, won a 2014 competition between five machines. They were tested at an event organized by the University of Reading, UK, at the Royal Society in central London, to see whether they could fool people into thinking they were human during text-based conversations. "Eugene Goostman" managed to convince 33% of the judges that it was human, the university said (2). Alan Turing, himself a Fellow of the Royal Society, who died on 7 June 1954, predicted that by the year 2000 a machine would be able to fool 30% of human judges in a five-minute test, and that people would no longer consider the phrase "thinking machine" contradictory.

The weaknesses of the test as a measure of intelligence have been explored in many research papers since, from the ability to fool the test through deception and avoidance of answering, to gaming the human interrogator. The other issue was that imitation was limited to textual answers through a computer keyboard and screen, far from representing any sense of human intelligence and experience, which includes multiple senses: touch, sight, feel, smell and so on.

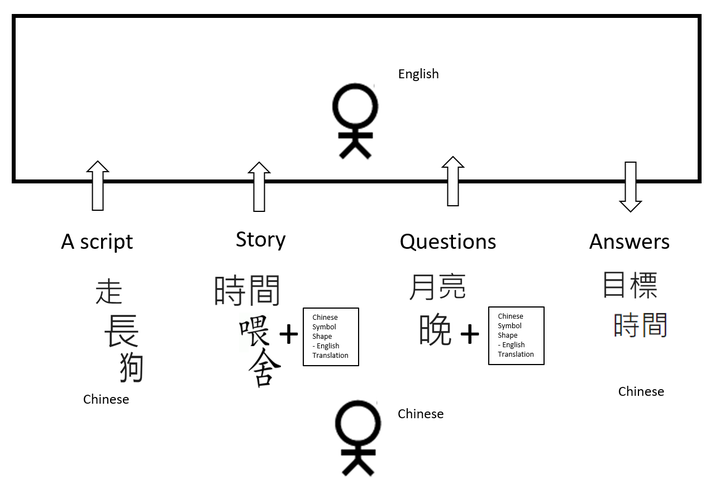

The Chinese Room Argument

A similar argument was developed by the philosopher John Searle in 1980 in response to the software program developed by Roger Schank and colleagues at Yale University (3), which aimed to simulate the human ability to understand stories. The characteristic of this human story understanding is the ability to answer questions about the story even when the information is not explicitly stated in the story.

In the thought experiment, Searle imagines himself alone, locked in a room and given a large batch of Chinese writing. He has no understanding of what the Chinese symbols mean. After this first batch he is given a second batch of Chinese script, together with a set of rules for correlating the second batch with the first. The rules are in English, and so Searle, an English speaker, can understand these rules as well as any other native speaker of English. The rules enable him to identify the Chinese symbols by their shapes. A third batch of Chinese symbols is then passed in, again with English instructions, to correlate these with the previous two batches. Over time, Searle theorizes, his answers in Chinese symbols, produced by following the English shape-matching rules, become so good that they are indistinguishable from those of native Chinese speakers, and nobody just looking at his answers can tell that he does not speak a word of Chinese (4).

Part of this set-up is that the people outside the room call the first batch a "script", the second batch a "story" and the third batch "questions". This introduces the notion of some knowledge and framing of the questions and responses, which we discuss in the next example.

John Searle’s Chinese Room experiment illustrates the limitation of the Turing test. The argument is the same: a digital computer can mimic the symbol-to-symbol translation patterns but does not understand, in this case, Chinese. Computers merely use syntactic rules to manipulate symbol strings, but have no understanding of meaning or semantics (5). This second thought experiment also challenges the idea that human intelligence in the brain is a computational process of information and data, and suggests that computers will at best simulate the biological process. It raises further questions in the theory of language and the mind, in the development of computer science and cognitive science, and in theories of consciousness (5).
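The point about purely syntactic rules can be made concrete with a very small sketch. The rule book below is hypothetical and far simpler than anything Searle describes. It pairs input symbol strings with output symbol strings by exact match, and the English glosses in the comments are there for the reader only, since the program never uses them.

```python
# Hypothetical rule book: input symbol strings mapped to output symbol strings.
RULE_BOOK = {
    "你好吗": "我很好",                # "How are you?" -> "I am fine"
    "你叫什么名字": "我没有名字",      # "What is your name?" -> "I have no name"
}

# Reply used when no rule matches ("Please say that again").
FALLBACK = "请再说一遍"


def chinese_room(symbols):
    """Return the reply paired with the input, by exact shape match only."""
    return RULE_BOOK.get(symbols, FALLBACK)
```

A call such as `chinese_room("你好吗")` returns a fluent reply, yet nothing in the program represents what either string means. That is the gap between syntax and semantics the argument turns on.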

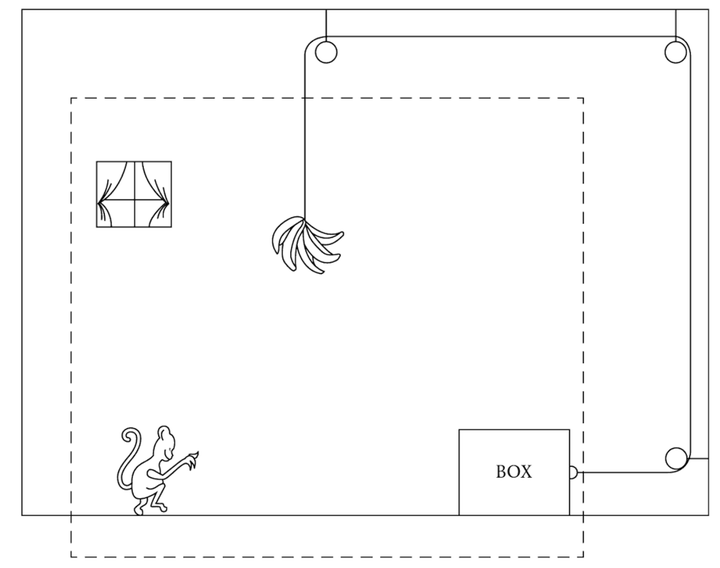

The Framing Problem - The Monkey and the Bananas

In both the imitation game and the Chinese room argument, a similarity might be drawn in the ability to find knowledge appropriate to a situation through some sort of exchange of data and its translation into actions. But, as the many self-driving car scenarios show, real situations are often unstructured and undefined: the vehicle must sense and make decisions to respond to planned and unplanned events. This points to a wider issue in robotics and artificial intelligence described as the “framing problem”: identifying an adequate set of data that represents a situation and environment. Margaret Boden described this as the Monkey and Banana Problem in her recent book (6), relating it back to the scenario from her 1977 work, Artificial Intelligence and Natural Man (7). The question “how does the monkey get the banana?”, shown in figure x, illustrates that the monkey’s world view, denoted by the dotted box, is limited by its assumption that its immediate environment is static and does not change. In other words, nothing exists outside this frame that causes significant changes in it when the box is moved (6).

Figure x The Monkey and bananas problem. Reprinted from M.A. Boden, Artificial Intelligence and Natural Man (1977:387) MIT Press

The “framing problem” was originally defined by John McCarthy and Patrick J. Hayes in their 1969 article, Some Philosophical Problems from the Standpoint of Artificial Intelligence (8). This described framing as a mathematical issue with using first-order logic (FOL) to express facts about a robot in the world. The use of specific quantified variables to describe simple logical facts was limited by not being able to change variables to expand the description of that environment. Hayes compared it to the human brain, as a problem of finding adequate collections of axioms (the analogy here is to knowledge) for a viable description of a robot environment (9). Boden says framing problems arise because AI programs do not have a human’s sense of relevance: an awareness and knowledge with which to navigate the environment and problem space. This presupposes that a machine intelligence must have a model of all possible situations in order to navigate an event. Other fields, such as multi-agent systems (MAS) that use collective intelligence, are one example of how this may be evolving.

If all possible scenarios in an environment or problem context are known, then the frame problem can be avoided (6). This is the case in specialized machine intelligence that is defined by a known set of parameters and scenarios. But in unstructured real-world scenarios it is not the case, which is why the framing problem is a challenge both in the immediate term, in enabling specialized machine intelligence to adapt to more situations, and over the much longer horizon of artificial general intelligence (AGI).
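The closed-world case described above, where every scenario is known in advance, can be sketched as a small search over a fully enumerated state space. The positions, action names and state encoding below are assumptions made for illustration, not Boden's or McCarthy and Hayes's formulation. Each action writes out the complete next state by hand, so anything not mentioned in the state tuple is assumed never to change, which is exactly the static-frame assumption.

```python
from collections import deque

# Hypothetical closed world: three places, with the banana hanging in the middle.
POSITIONS = ("door", "window", "middle")
BANANA_AT = "middle"


def successors(state):
    """Yield (action, next_state) pairs for every action allowed in this state.

    A state is the tuple (monkey_position, box_position, on_box, has_banana).
    """
    monkey, box, on_box, has_banana = state
    if has_banana:
        return
    if on_box:
        if box == BANANA_AT:
            yield "grasp banana", (monkey, box, True, True)
        yield "climb down", (monkey, box, False, False)
        return
    for place in POSITIONS:
        if place != monkey:
            yield f"walk to {place}", (place, box, False, False)
            if monkey == box:
                # The box moves only when the monkey pushes it.
                yield f"push box to {place}", (place, place, False, False)
    if monkey == box:
        yield "climb box", (monkey, box, True, False)


def plan(start):
    """Breadth-first search for a shortest action sequence that gets the banana."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, actions = queue.popleft()
        if state[3]:  # has_banana
            return actions
        for action, nxt in successors(state):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, actions + [action]))
    return None
```

Starting from `("door", "window", False, False)`, the search finds the four-step plan of walking to the box, pushing it under the banana, climbing and grasping. The plan is only valid while the world stays inside the enumerated frame. If something outside the tuple moved the box or the banana, the planner would have no way to notice.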

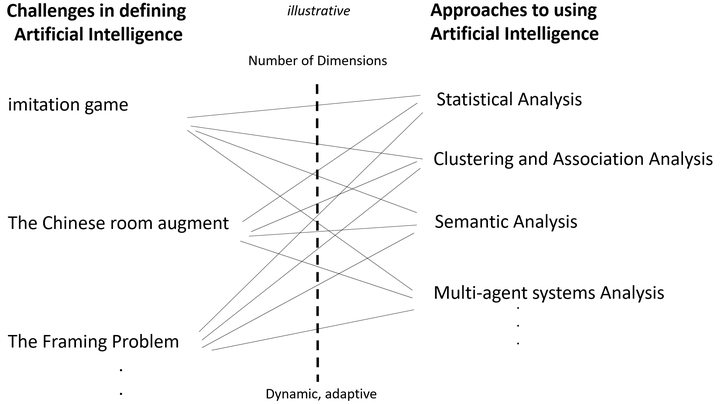

Dimensions and complexity

The framing problem also points towards another challenge in machine intelligence that is worth noting at a practitioner level: dimensionalizing the scope of a problem and its solution. In machine learning this is a key technique, both in defining algorithms and, in neural networks, in choosing the types of variables and the number of levels in the network. In a simple environment and problem space, a few variables may be enough to define the inputs, outputs and processing. More complex situations, which require more data and information to define the scenario, add more dimensions to this model of computation.

Dimensional reduction in statistics and machine learning is the process of reducing the number of random variables under consideration to obtain a set of principal variables that define the key features. This might be compared to the human brain focusing on information that is relevant to a situation and filtering out, subconsciously or consciously, what is not important to a conscious task. But in machine learning, dimensionalizing also refers to clustering and matching processes for data, and to many other techniques for turning data into training data that can generate a model for useful intelligence. Dimensional analysis can also run into many higher dimensions when organizing and analyzing data, in what some refer to as the “curse of dimensionality”. With increasing dimensions comes further complexity, which can include changing data conditions in dynamic systems and adaptive control systems for machine learning. In structured automation problems the number of dimensions can be minimized for specialized machine learning. However, dimensions increase and additional complexity arises as unstructured data and unplanned events become part of the frame space found in real-world situations. Moving specialized AI into multiple scenarios and broadening its approach represents one frontier of current AI research into adaptive systems. Understanding current frame spaces and AI solutions is part of this book’s exploration.
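The “curse of dimensionality” mentioned above can be illustrated with two short calculations. This is a minimal sketch with hypothetical function names, and it shows only two common geometric illustrations of the effect rather than a dimensional reduction method. The first counts how many cells a grid needs as variables are added. The second measures how little of a unit hypercube lies inside the largest ball that fits in it.

```python
import math


def grid_cells(bins_per_axis, dimensions):
    """Number of cells when each of `dimensions` variables is cut into bins."""
    return bins_per_axis ** dimensions


def inscribed_ball_fraction(dimensions):
    """Fraction of a unit hypercube's volume inside its inscribed ball.

    Uses the standard volume formula for a d-dimensional ball of radius 0.5:
    pi^(d/2) / Gamma(d/2 + 1) * 0.5^d.
    """
    d = dimensions
    return math.pi ** (d / 2) / math.gamma(d / 2 + 1) * 0.5 ** d
```

With ten bins per variable, three variables need `grid_cells(10, 3)`, or 1,000 cells, while ten variables need ten billion. Over the same range the inscribed ball shrinks from about 79% of the square in two dimensions to well under 1% of the hypercube in ten. This is one way to see why a fixed amount of training data covers a high-dimensional frame space so thinly.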

Reflections on challenges and approaches to AI

Alan Turing said in 1950 that sensors (to collect data) would be critical to enabling the “thinking machine”. Whether the limits to achieving this lie in getting the right data to frame an environment, in the need for faster, more powerful computers to process ever larger and more complex models, or in improving programming languages so that they can define the model of intelligence, all are part of the journey. The limits of current machine learning are defined by these questions, but we also see a constantly improving and fast-moving field that is central to shaping the 4th Industrial Revolution.

Some recent reflections by two pioneers in computing illustrate different but expanding approaches related to artificial intelligence.

Defining Models of computation and Human-machine symbiosis

Carl Hewitt, a computer scientist who invented the Planner programming language (10) (see figure x), recently commented that “we will have intelligent applications but not have, for now, artificial intelligence. Which means the name of the game now is collaborating with humans to make more intelligent systems. The computers cannot do it by themselves. In that respect Licklider (a researcher from MIT in 1960) was absolutely correct: what psychologists call ‘man-machine symbiosis’ (11) is now called collaboration. Effective, intelligent applications must have intense collaboration with humans or be ineffective. What that means in practice is that we will have ‘computer-assisted driving for our cars’ for a long time, at least a decade. We will not have ‘computer only driven cars’ because we can’t do it.” (12)

Hewitt develops this argument by focusing on models of computation rather than on the programming language alone. This can be extrapolated to a wider point: the design of machine learning and artificial intelligence should focus on the framing of the task and problem space.

“What we have is the need to distinguish between programming language and models of computation.”

“Why bother with a model of computation?”

“A model of computation enables you to reason about whole classes of systems. If I came up with something that you could not implement in (a programming language) it is not saying that a whole class of things cannot be done with that programming language. It may be possible to tweak the programming language so it could do it.” (12)

Quantum computing and neuromorphic computing

Carver Mead, the computer scientist and a pioneer of very large-scale integration (VLSI) silicon chip design, also coined the phrase “Moore’s Law”, helping to popularize Gordon Moore’s 1965 observation, revised in 1975, that the number of transistors on an integrated circuit doubles about every 24 months. Mead detailed why it is important for computer engineers to explore new forms of computing: more computational power is needed to meet the increasing demands of computation, and the limits of conventional silicon under Moore’s law are now within sight (13). Mead concluded that progress would need research into fields such as quantum computing, which uses quantum superposition and entanglement to create qubits of data for quantum data operations (14), and neuromorphic computing, which seeks to learn from biological approaches to information processing (15).
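The doubling rule quoted above is simple compound growth, which a short sketch makes explicit. The function name and the starting count in the usage note are hypothetical. The 24-month doubling period is the figure given in the text, and real chips have not followed it exactly.

```python
def projected_transistors(initial_count, years, doubling_period_years=2.0):
    """Project a transistor count assuming one doubling per period."""
    return initial_count * 2 ** (years / doubling_period_years)
```

Under this assumption a chip with 1,000 transistors would grow to `projected_transistors(1000, 10)`, or 32,000, after ten years, and to roughly a million after twenty. It is this exponential curve that Mead argues conventional silicon can no longer sustain.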

Summary

From a general practitioner’s point of view it is clear that artificial intelligence is still evolving, and that new theories and models are needed to create the training data required to pursue meaningful and useful machine intelligence. Whether self-driving cars will be truly computer-only or will need human assistance is certainly open to debate. That debate is grounded in the notion that progress in artificial intelligence, from imitation of human tasks to more complex human and non-human tasks, will be driven by the quality of models of computation. Clearly, specialized machine intelligence has made huge strides in recent decades. While historically the expectations of artificial intelligence have been repeatedly exaggerated, it is the evolution of computing languages and system models, together with sensors and data, that will continue to address the challenges in defining AI and in using it effectively.

Forthcoming book: The Fourth Industrial Revolution: An Executive Guide to Intelligent Systems, 2017 Palgrave Macmillan. Professor Mark Skilton. Dr Felix Hovsepian.

1. Turing, A.M. (1950). Computing machinery and intelligence. Mind, 59, 433-460.

2. Turing test marks milestone in computing history, 8 June 2014 http://www.reading.ac.uk/news-and-events/releases/PR583836.aspx

3. Schank, R.C. & Abelson, R. (1977). Scripts, Plans, Goals, and Understanding: An Inquiry Into Human Knowledge Structures (Artificial Intelligence). Hillsdale, NJ: Erlbaum Assoc.

4. Searle, John (1980), "Minds, Brains and Programs", Behavioral and Brain Sciences, 3 (3): 417–457, doi:10.1017/S0140525X00005756

5. The Chinese Room Argument, Apr 9, 2014, Stanford Encyclopedia of Philosophy https://plato.stanford.edu/entries/chinese-room/#4.2

6. Margaret A. Boden, “AI: Its Nature and Future”, 26 May 2016, Oxford University Press, Oxford; 1st edition

7. M.A. Boden, Artificial Intelligence and Natural Man (1977:387) MIT Press

8. J. McCarthy, P.J. Hayes, Some philosophical problems from the standpoint of artificial intelligence, B. Meltzer, D. Michie (Eds.) (2nd ed.), Machine Intelligence, 4, Edinburgh University Press, Edinburgh (1969), pp. 463-502

9. Hayes, Patrick. "The Frame Problem and Related Problems in Artificial Intelligence", 1971, Stanford Computer Science Department. Report No. CS-242. Stanford Artificial Intelligence Project Memo AIM-153

10. Carl Hewitt. PLANNER: A Language for Manipulating Models and Proving Theorems in a Robot. IJCAI. 1970-08-01 http://hdl.handle.net/1721.1/6171

11. J. C. R. Licklider. Man-Computer Symbiosis, IRE Transactions on Human Factors in Electronics, volume HFE-1, pages 4-11, March 1960. MIT Computer Science and Artificial Intelligence Laboratory

12. Concurrency and Strong Types for IoT - Carl Hewitt, May 24 2017, Erlang & Elixir Factory SF 2017 https://www.youtube.com/watch?v=dvc3OKJ-dTk&feature=youtu.be&t=34m59s

13. Three Questions for Computing Pioneer Carver Mead, MIT Technology Review, November 13, 2013 https://www.technologyreview.com/s/521501/three-questions-for-computing-pioneer-carver-mead/

14. Neuromorphic Electronic Systems, Carver Mead, Proceedings of the IEEE, Vol. 78, No. 10, October 1990. https://web.stanford.edu/group/brainsinsilicon/documents/MeadNeuroMorphElectro.pdf